In case you haven’t been paying attention, one of the hottest topics in the tech industry right now is the concept of “the edge.” Virtually every major tech company has been talking about its strategic approach to this new method of computing, and the announcements are bound to keep coming.

Standalone PCs are an example of edge devices, but when most companies talk about the edge, they really mean things like drones, smart home gadgets, sensor-based industrial devices, autonomous cars, and so on. While these devices can, and often do, connect to the Internet, they can also compute independently, courtesy of built-in x86 processors or Arm-based microcontrollers and embedded software.

At Microsoft’s Build developer conference in Seattle, the company put a particularly strong focus on what it terms the “intelligent edge” and touted it as one of the next major revolutions in computing. The “intelligent” moniker stems from the use of AI, machine learning, and other advanced computing concepts in these edge devices, which brings a whole new level of capability, and attention, to them.

As appealing as the concept of intelligent edge computing may be, however, there have been some real challenges in enabling the potential of these new devices. The biggest issue is creating software to run on the often novel architectures used inside them. To that end, Microsoft has been putting a great deal of effort into both the platform side and the application development side.

Because there is a wide range of devices with varying levels of sophistication and differing hardware resources, Microsoft now has several platform choices, including Windows 10 IoT Core, Windows 10 IoT Enterprise, and Azure IoT Edge Runtime, which the company is now open-sourcing. The Azure IoT Edge Runtime offering, in particular, is well suited to the enormous array of smart products appearing everywhere from our homes to hospitals to factories, farms, and more.

Technically, the Runtime is a collection of programs that run on top of the various types of Linux, Windows, or other embedded operating systems found in these smart devices, hence the name. What it does is create a common platform for which applications can be written. Without the consistent capabilities enabled by the Azure IoT Edge Runtime, developers would have to create new or different versions of their applications for each potential hardware/software combination, an impossible task.
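To make the "common platform" idea concrete, here is a minimal Python sketch. It is an illustration only and does not use the actual Azure IoT Edge Runtime API: the `EdgeRuntime` interface, the two platform classes, and `report_temperature` are all hypothetical names invented for this example. The point is simply that the application function is written once against a shared interface and runs unchanged on either platform.

```python
from typing import List, Protocol


class EdgeRuntime(Protocol):
    """Hypothetical common interface that every device platform exposes."""

    def read_sensor(self) -> float: ...
    def send(self, message: str) -> None: ...


class LinuxArmRuntime:
    """Stand-in for a runtime on an Arm-based Linux device (assumed values)."""

    def __init__(self) -> None:
        self.outbox: List[str] = []

    def read_sensor(self) -> float:
        return 21.5  # hard-coded sample reading for the sketch

    def send(self, message: str) -> None:
        self.outbox.append(f"linux-arm: {message}")


class WindowsX86Runtime:
    """Stand-in for a runtime on an x86 Windows device (assumed values)."""

    def __init__(self) -> None:
        self.outbox: List[str] = []

    def read_sensor(self) -> float:
        return 19.0  # hard-coded sample reading for the sketch

    def send(self, message: str) -> None:
        self.outbox.append(f"windows-x86: {message}")


def report_temperature(runtime: EdgeRuntime) -> None:
    """Application logic written once, against the shared interface only."""
    runtime.send(f"temperature={runtime.read_sensor():.1f}")


if __name__ == "__main__":
    for rt in (LinuxArmRuntime(), WindowsX86Runtime()):
        report_temperature(rt)
        print(rt.outbox)
```

Without the shared interface, `report_temperature` would need a separate version for each hardware/software combination, which is the duplication the runtime is meant to remove.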

In addition to this common base, Microsoft’s Azure IoT Edge Runtime builds on the same “regular” Azure platform that the company uses for its cloud computing offerings. This is very important for developers because it means they can use the same development tools and methodologies that they use for cloud-based programming models, including containers, to create software for these intelligent edge devices. This, in turn, makes it much easier for companies to build edge applications without needing people with entirely new types of programming skills.

Microsoft used its Azure platform approach to build and release a set of AI-based computer vision processing tools called Custom Vision that can leverage cameras and Qualcomm-based image processing silicon on edge devices. Part of what Microsoft calls Azure Cognitive Services, Custom Vision essentially brings “eyes” to the edge, letting companies build applications that react to the visual information these smart edge devices see, without needing a connection to the cloud.

A great example of this showed off Microsoft’s new partnership with DJI, the world’s largest drone maker. Using a DJI drone running Custom Vision on the Azure IoT Edge Runtime, the two companies showed how to create an app that can see and visually annotate, in real time, anomalies or other faults on a pipe being inspected by the drone. Microsoft is also working with DJI to build a Software Development Kit (SDK) for Windows 10 devices that allows for the creation of flight control and real-time vision or sensor-based applications for DJI drones.

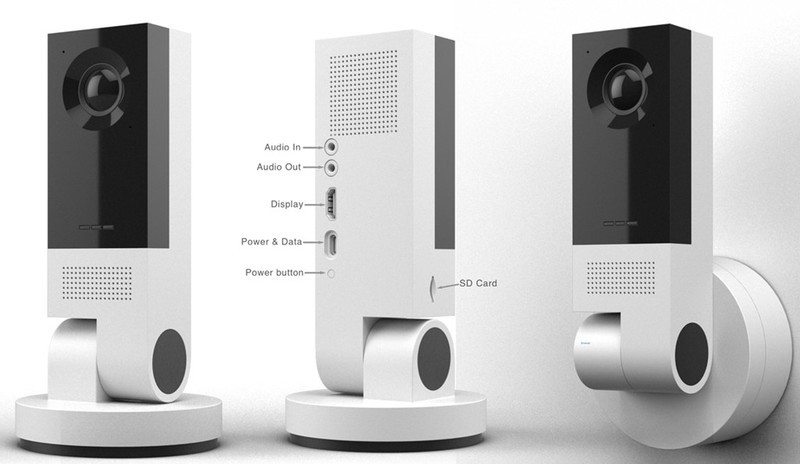

With Qualcomm, Microsoft announced a vision AI developer kit that uses Azure Machine Learning services to create vision-aware applications for edge devices. Those applications can be accelerated by Qualcomm silicon, along with the Qualcomm Vision Intelligence Platform and Qualcomm AI Engine software tools that Qualcomm has created. In practical terms, this means developers could create applications for smart home visual doorbells or security cameras equipped with certain Qualcomm chips that recognize specific objects or individuals and react appropriately, such as sending security notifications only if a person isn’t “known” to the household.
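The doorbell scenario comes down to a small decision rule applied on the device to each detection. The Python sketch below shows one way that rule might look. It is not taken from the Qualcomm or Microsoft developer kits: the `Detection` record, the `KNOWN_HOUSEHOLD` set, the `should_notify` function, and the 0.8 confidence threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of identities the household has enrolled on the device.
KNOWN_HOUSEHOLD = {"alice", "bob"}


@dataclass(frozen=True)
class Detection:
    """One result from an assumed on-device vision model."""

    label: str               # detected object class, e.g. "person" or "package"
    identity: Optional[str]  # matched household member, or None if unrecognized
    confidence: float        # model confidence in the range 0.0 to 1.0


def should_notify(detection: Detection, min_confidence: float = 0.8) -> bool:
    """Return True only for a confidently detected person who is not known."""
    if detection.label != "person":
        return False  # ignore packages, pets, cars, and so on
    if detection.confidence < min_confidence:
        return False  # too uncertain to act on
    return detection.identity not in KNOWN_HOUSEHOLD


if __name__ == "__main__":
    visitor = Detection(label="person", identity=None, confidence=0.95)
    resident = Detection(label="person", identity="alice", confidence=0.95)
    print(should_notify(visitor), should_notify(resident))
```

Because the rule runs entirely on the device, the camera can decide whether to raise an alert without first sending video to the cloud.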

What’s particularly intriguing about this example is that it’s one of the first of what will likely be many AI edge applications that take advantage of the unique characteristics of certain types of silicon. Look for many more accelerated AI edge applications to come.

The potential opportunities with intelligent edge computing are already enormous, and that’s why there is now tremendous excitement within the tech industry about how these technologies can be applied. There are still several hurdles ahead and a fair amount of work involved, but real progress is being made. As Microsoft demonstrated, we’re starting to see some critical strategic steps toward simplifying what can be a very complex topic. As a result, it shouldn’t be long before a much broader range of developers can start leveraging capabilities such as vision on the edge. When they do, the kinds of applications and services we can enjoy are going to be amazing.

Great information shared.. really enjoyed reading this post thank you author for sharing this post .. appreciated

There is definately a lot to find out about this subject. I like all the points you made

Greetings! Very helpful advice in this particular article! It is the little changes which will make the most important changes. Thanks a lot for sharing!

Excellent article! We will be linking to this particularly great article on our website. Keep up the good writing.

prescription drugs without doctor approval: prescription meds without the prescriptions – real viagra without a doctor prescription

Hello my loved one! I want to say that this post is amazing, nice written and include almost all significant infos.

I would like to see extra posts like this .

Appreciation to my father who informed me regarding this weblog, this blog is in fact

amazing.

As the admin of this web site is working, no question very soon it will

be famous, due to its feature contents.

http://mexicopharm.shop/# mexico pharmacies prescription drugs

mexican pharmacy without prescription: prescription drugs without prior prescription – buy prescription drugs online without

amoxicillin 500mg cost: cheap amoxicillin 500mg – amoxicillin buy no prescription

legal to buy prescription drugs without prescription: levitra without a doctor prescription – viagra without a prescription

I wanted to thank you for this very good read!!

I absolutely enjoyed every bit of it. I have got you

book-marked to look at new things you post…

https://canadapharm.top/# canada drugs online review

It’s remarkable to pay a visit this site and reading the views of all colleagues regarding this paragraph, while I am

also zealous of getting knowledge.

https://canadapharm.top/# safe canadian pharmacies

https://sildenafil.win/# sildenafil citrate over the counter

https://kamagra.team/# Kamagra 100mg price

Kamagra 100mg price Kamagra 100mg price Kamagra Oral Jelly

Levitra 20 mg for sale: Buy Levitra 20mg online – Levitra 20 mg for sale

http://levitra.icu/# Vardenafil online prescription

Heya terrific website! Does running a blog similar to this

require a great deal of work? I have very little expertise in coding however I had been hoping to

start my own blog soon. Anyhow, if you have any recommendations or tips for new blog owners please share.

I know this is off topic nevertheless I just wanted to ask.

Appreciate it!

ed medications top erection pills best treatment for ed

sildenafil 100 mg: viagra sildenafil citrate – sildenafil price comparison

Whoa! This blog looks just like my old one!

It’s on a totally different topic but it has pretty much the same layout and design. Excellent choice of

colors!

https://levitra.icu/# Cheap Levitra online

Kamagra Oral Jelly: buy Kamagra – Kamagra 100mg

Having read this I thought it was rather enlightening. I appreciate

you finding the time and effort to put this information together.

I once again find myself personally spending way too

much time both reading and commenting. But so what, it was

still worthwhile!

tadalafil 2.5 mg tablets tadalafil online in india buy tadalafil 5mg online

https://edpills.monster/# cheap erectile dysfunction pills online

http://levitra.icu/# Levitra 10 mg best price

the best ed pill natural ed remedies medication for ed

cheapest ed pills: ed treatment drugs – best medication for ed

ed medications online: treatment of ed – ed medications list

http://tadalafil.trade/# generic tadalafil united states

zithromax over the counter canada zithromax antibiotic cost of generic zithromax

ciprofloxacin mail online: Buy ciprofloxacin 500 mg online – antibiotics cipro

how much is lisinopril 40 mg: Over the counter lisinopril – 60 lisinopril cost

https://amoxicillin.best/# amoxicillin 500mg for sale uk

buy cipro online canada Ciprofloxacin online prescription cipro for sale

buy zithromax no prescription: zithromax antibiotic without prescription – zithromax prescription in canada

http://doxycycline.forum/# doxycycline price uk

zithromax antibiotic buy zithromax buy zithromax online

amoxicillin order online no prescription: azithromycin amoxicillin – amoxicillin discount

how to buy amoxycillin: amoxil for sale – amoxicillin 500 mg online

amoxicillin tablet 500mg can you buy amoxicillin over the counter in canada over the counter amoxicillin

order amoxicillin online no prescription: buy amoxil – amoxicillin pills 500 mg

http://lisinopril.auction/# lisinopril comparison

lisinopril without rx: Buy Lisinopril 20 mg online – prescription drug prices lisinopril

doxycline Buy Doxycycline for acne doxycycline without prescription

zithromax 500mg price in india: buy zithromax canada – zithromax buy

ciprofloxacin order online Ciprofloxacin online prescription ciprofloxacin 500mg buy online

zithromax generic price: how to get zithromax – zithromax prescription

offshore online pharmacies: Online pharmacy USA – cheap canadian cialis

pharmacies in mexico that ship to usa mexican online pharmacy mexican pharmacy

best india pharmacy: indian pharmacies safe – buy medicines online in india

http://canadiandrugs.store/# safe canadian pharmacy

safe online pharmacies in canada: trust canadian pharmacy – canada drugs online

pharmacy online canada: online meds – cheap online pharmacy

http://buydrugsonline.top/# discount online canadian pharmacy

mexican pharmaceuticals online mexican pharmacy online medication from mexico pharmacy

canada pharmacies online: order medication online – mexican online pharmacy

best 10 online pharmacies: buy drugs online – online pharmacies without prescription

paxlovid pill: Paxlovid without a doctor – п»їpaxlovid

http://clomid.club/# can i get generic clomid without insurance

wellbutrin 100mg tablets: Buy bupropion online Europe – wellbutrin prescription mexico

https://paxlovid.club/# Paxlovid buy online

buy paxlovid online: buy paxlovid – paxlovid price

https://wellbutrin.rest/# wellbutrin 450 mg

paxlovid cost without insurance https://paxlovid.club/# paxlovid

Pretty section of content. I just stumbled upon your site and in accession capital to assert

that I get in fact enjoyed account your blog posts.

Any way I’ll be subscribing to your augment

and even I achievement you access consistently rapidly.

how can i get clomid online: clomid best price – can you get generic clomid

https://paxlovid.club/# paxlovid generic

paxlovid price: Paxlovid over the counter – paxlovid generic

https://gabapentin.life/# buy cheap neurontin online

how much is wellbutrin: buy wellbutrin – 1800 mg wellbutrin

farmacia online: Farmacie che vendono Cialis senza ricetta – farmacie online autorizzate elenco

I do not know whether it’s just me or if perhaps everybody else encountering problems

with your site. It appears as if some of the written text in your content are running off the screen. Can somebody else please provide feedback

and let me know if this is happening to them as well? This could be a problem with my

browser because I’ve had this happen previously.

Appreciate it

viagra acquisto in contrassegno in italia: alternativa al viagra senza ricetta in farmacia – dove acquistare viagra in modo sicuro

farmacie online autorizzate elenco: Tadalafil prezzo – farmacie online sicure

http://avanafilit.icu/# farmacie online affidabili

farmacia online migliore: Farmacie a roma che vendono cialis senza ricetta – farmacia online miglior prezzo

Spot on with this write-up, I seriously believe that this web site needs far more attention. I’ll probably be back again to

read more, thanks for the information!

acquistare farmaci senza ricetta cialis prezzo comprare farmaci online all’estero

farmacie online autorizzate elenco: cialis prezzo – comprare farmaci online con ricetta

farmacie online affidabili: farmacia online piГ№ conveniente – comprare farmaci online con ricetta

farmacia online più conveniente: cialis prezzo – comprare farmaci online all’estero

Today, while I was at work, my cousin stole my iPad and tested to see if it can survive a 30 foot drop, just so she can be a youtube sensation. My apple ipad is now destroyed and she has 83 views. I know this is completely off topic but I had to share it with someone!

acquisto farmaci con ricetta farmacia online migliore farmacia online

farmacie online autorizzate elenco: farmacie on line spedizione gratuita – farmacia online migliore

https://avanafilit.icu/# farmacia online senza ricetta

farmacia online kamagra oral jelly acquisto farmaci con ricetta

http://kamagrait.club/# farmacie online sicure

acquistare farmaci senza ricetta: farmacia online migliore – farmacie online affidabili

comprare farmaci online all’estero kamagra gold farmacia online piГ№ conveniente

farmaci senza ricetta elenco: Avanafil farmaco – acquistare farmaci senza ricetta

farmacia online envГo gratis kamagra 100mg farmacia envГos internacionales

п»їfarmacia online: farmacia envio gratis – farmacia online barata

http://www.bestartdeals.com.au is Australia’s Trusted Online Canvas Prints Art Gallery. We offer 100 percent high quality budget wall art prints online since 2009. Get 30-70 percent OFF store wide sale, Prints starts $20, FREE Delivery Australia, NZ, USA. We do Worldwide Shipping across 50+ Countries.

sildenafil 100mg genГ©rico sildenafilo precio sildenafilo cinfa 100 mg precio farmacia

http://tadalafilo.pro/# farmacias online baratas

Thanks for helping me to achieve new concepts about computer systems. I also possess the belief that one of the best ways to maintain your notebook computer in prime condition is with a hard plastic-type case, and also shell, that suits over the top of your computer. A lot of these protective gear usually are model distinct since they are manufactured to fit perfectly across the natural housing. You can buy them directly from the seller, or from third party sources if they are for your notebook computer, however not all laptop will have a shell on the market. Once again, thanks for your tips.

farmacia envГos internacionales precio cialis en farmacia con receta farmacia online barata

farmacia online 24 horas: farmacia online envio gratis valencia – п»їfarmacia online

http://sildenafilo.store/# viagra online rГЎpida

What an insightful and meticulously-researched article! The author’s thoroughness and capability to present complicated ideas in a digestible manner is truly praiseworthy. I’m totally enthralled by the breadth of knowledge showcased in this piece. Thank you, author, for sharing your expertise with us. This article has been a game-changer!

farmacias online seguras comprar cialis original farmacia envГos internacionales

farmacia online madrid: kamagra gel – farmacias online baratas

comprar viagra en espaГ±a envio urgente comprar viagra en espana comprar viagra online en andorra

farmacia barata: kamagra gel – farmacias baratas online envГo gratis

farmacia envГos internacionales: farmacia online 24 horas – farmacia online 24 horas

farmacia online internacional: Precio Cialis 20 Mg – farmacia barata

farmacias baratas online envГo gratis kamagra oral jelly farmacia 24h

https://vardenafilo.icu/# farmacia 24h

comprar viagra en espaГ±a: comprar viagra – se puede comprar sildenafil sin receta

https://vardenafilo.icu/# farmacia online barata

sildenafilo 100mg precio espaГ±a comprar viagra contrareembolso 48 horas viagra para hombre precio farmacias

https://farmacia.best/# farmacia online internacional

pharmacie ouverte Acheter mГ©dicaments sans ordonnance sur internet п»їpharmacie en ligne

Pharmacie en ligne livraison gratuite: kamagra 100mg prix – Pharmacie en ligne sans ordonnance

farmacias online seguras: mejores farmacias online – farmacia envГos internacionales

Viagra pas cher livraison rapide france Viagra gГ©nГ©rique pas cher livraison rapide Viagra Pfizer sans ordonnance

Viagra vente libre pays: Viagra sans ordonnance 24h – Viagra en france livraison rapide

pharmacie ouverte cialis Pharmacie en ligne pas cher

comprar viagra en espaГ±a: viagra precio – viagra para mujeres

Pharmacies en ligne certifiГ©es: pharmacie en ligne – Pharmacie en ligne livraison 24h

pharmacie ouverte 24/24 kamagra en ligne Pharmacie en ligne livraison gratuite

farmacias online seguras en espaГ±a: Cialis generico – farmacia online 24 horas

acheter medicament a l etranger sans ordonnance: tadalafil sans ordonnance – Pharmacie en ligne livraison rapide

http://kamagrakaufen.top/# online apotheke preisvergleich

gГјnstige online apotheke cialis rezeptfreie kaufen versandapotheke versandkostenfrei

http://kamagrakaufen.top/# online-apotheken

http://kamagrakaufen.top/# online-apotheken

п»їonline apotheke kamagra online bestellen online-apotheken

http://viagrakaufen.store/# Viagra rezeptfreie Länder

hello!,I love your writing so so much! proportion we keep in touch more approximately your article on AOL?

I require a specialist in this space to resolve my problem.

Maybe that’s you! Looking ahead to peer you.

https://viagrakaufen.store/# Sildenafil Preis

п»їViagra kaufen viagra kaufen ohne rezept legal Viagra Preis Schwarzmarkt

http://apotheke.company/# versandapotheke versandkostenfrei

Viagra wie lange steht er viagra ohne rezept Wo kann man Viagra kaufen rezeptfrei

Sildenafil 100mg online bestellen: Viagra kaufen gГјnstig Deutschland – Viagra online kaufen legal

https://cialiskaufen.pro/# online apotheke deutschland

online apotheke preisvergleich cialis kaufen versandapotheke deutschland

http://mexicanpharmacy.cheap/# mexican border pharmacies shipping to usa

best online pharmacies in mexico buying from online mexican pharmacy mexican pharmacy

http://mexicanpharmacy.cheap/# mexican pharmaceuticals online

buying prescription drugs in mexico online mexican online pharmacies prescription drugs purple pharmacy mexico price list

https://mexicanpharmacy.cheap/# mexico drug stores pharmacies

https://mexicanpharmacy.cheap/# medicine in mexico pharmacies

buying prescription drugs in mexico purple pharmacy mexico price list buying prescription drugs in mexico online

mexican pharmacy buying prescription drugs in mexico online mexican drugstore online

http://mexicanpharmacy.cheap/# medicine in mexico pharmacies

mexican drugstore online mexican pharmaceuticals online mexican pharmaceuticals online

https://mexicanpharmacy.cheap/# pharmacies in mexico that ship to usa

http://mexicanpharmacy.cheap/# reputable mexican pharmacies online

mexican rx online mexican pharmaceuticals online mexican mail order pharmacies

medication from mexico pharmacy mexican mail order pharmacies mexican mail order pharmacies

impotence pills erectile dysfunction medicines – ed pills online edpills.tech

medication for ed male ed pills new ed pills edpills.tech

buy prescription drugs from india Online medicine order – world pharmacy india indiapharmacy.guru

http://edpills.tech/# cheap erectile dysfunction pill edpills.tech

https://canadiandrugs.tech/# pharmacy com canada canadiandrugs.tech

http://canadapharmacy.guru/# canadian pharmacies comparison canadapharmacy.guru

https://indiapharmacy.guru/# india pharmacy indiapharmacy.guru

https://indiapharmacy.guru/# Online medicine order indiapharmacy.guru

canadian pharmacy checker safe reliable canadian pharmacy canadian pharmacy oxycodone canadiandrugs.tech

top ed drugs best pills for ed – buy ed pills online edpills.tech

https://indiapharmacy.guru/# mail order pharmacy india indiapharmacy.guru

https://indiapharmacy.guru/# Online medicine order indiapharmacy.guru

ed treatment pills cheap erectile dysfunction – medicine for impotence edpills.tech

https://indiapharmacy.guru/# india online pharmacy indiapharmacy.guru

http://edpills.tech/# male ed drugs edpills.tech

india pharmacy india online pharmacy india pharmacy mail order indiapharmacy.guru

http://canadiandrugs.tech/# ed meds online canada canadiandrugs.tech

http://canadiandrugs.tech/# cheap canadian pharmacy canadiandrugs.tech

canadian pharmacy meds online canadian pharmacy reviews – canadapharmacyonline legit canadiandrugs.tech

http://indiapharmacy.guru/# pharmacy website india indiapharmacy.guru

https://indiapharmacy.guru/# pharmacy website india indiapharmacy.guru

pharmacy canadian superstore legal to buy prescription drugs from canada – canadian pharmacy ltd canadiandrugs.tech

http://canadiandrugs.tech/# canadian pharmacy sarasota canadiandrugs.tech

https://canadapharmacy.guru/# buy drugs from canada canadapharmacy.guru

best canadian pharmacy to buy from canada drugs online reviews legit canadian pharmacy online canadiandrugs.tech

https://edpills.tech/# erectile dysfunction drugs edpills.tech

http://canadiandrugs.tech/# canadian online pharmacy reviews canadiandrugs.tech

http://indiapharmacy.guru/# top 10 online pharmacy in india indiapharmacy.guru

pharmacy in canada canadian pharmacy king – canadian pharmacy scam canadiandrugs.tech

http://edpills.tech/# ed meds online edpills.tech

http://canadiandrugs.tech/# safe canadian pharmacy canadiandrugs.tech

cheapest pharmacy canada online canadian pharmacy – canadian pharmacies comparison canadiandrugs.tech

best india pharmacy indian pharmacy Online medicine home delivery indiapharmacy.guru

http://edpills.tech/# ed medication edpills.tech

https://edpills.tech/# pills for ed edpills.tech

http://canadiandrugs.tech/# reliable canadian pharmacy reviews canadiandrugs.tech

http://indiapharmacy.pro/# indian pharmacy online indiapharmacy.pro

https://edpills.tech/# best ed pills edpills.tech

canadian pharmacy online store best rated canadian pharmacy – canada pharmacy 24h canadiandrugs.tech

https://canadiandrugs.tech/# canadian pharmacy 24 canadiandrugs.tech

best canadian pharmacy canadapharmacyonline my canadian pharmacy rx canadiandrugs.tech

https://edpills.tech/# natural ed medications edpills.tech

Its such as you read my mind! You seem to understand so much approximately this, such as you wrote the guide in it or something. I feel that you could do with some p.c. to pressure the message house a bit, but other than that, that is wonderful blog. A great read. I will definitely be back.

ed drugs natural ed medications – best ed pills non prescription edpills.tech

https://edpills.tech/# cheapest ed pills edpills.tech

http://edpills.tech/# treatment of ed edpills.tech

https://edpills.tech/# medicine erectile dysfunction edpills.tech

https://canadiandrugs.tech/# online canadian pharmacy review canadiandrugs.tech

india pharmacy buy medicines online in india online pharmacy india indiapharmacy.guru

world pharmacy india top 10 online pharmacy in india – п»їlegitimate online pharmacies india indiapharmacy.guru

paxlovid for sale: Paxlovid over the counter – paxlovid for sale

http://ciprofloxacin.life/# cipro 500mg best prices

https://ciprofloxacin.life/# ciprofloxacin generic

paxlovid buy: paxlovid price – paxlovid cost without insurance

where can i get cheap clomid without a prescription: where can i buy cheap clomid price – can i get cheap clomid tablets

prednisone prices prednisone 40 mg tablet cost of prednisone tablets

get clomid no prescription: can you get clomid now – can you buy cheap clomid now

paxlovid pharmacy: paxlovid covid – Paxlovid buy online

https://prednisone.bid/# buy prednisone without prescription

prednisone 60 mg price: prednisone 40 mg – buy 40 mg prednisone

http://prednisone.bid/# prednisone medication

amoxicillin medicine over the counter: amoxicillin pills 500 mg – amoxicillin 875 mg tablet

For most up-to-date news you have to go to see web and on the

web I found this website as a most excellent site for most recent updates.

buy cheap amoxicillin: medicine amoxicillin 500 – where to buy amoxicillin 500mg without prescription

buy cipro online: buy cipro online without prescription – ciprofloxacin mail online

buy cipro online without prescription: cipro 500mg best prices – where can i buy cipro online

buying amoxicillin online: generic amoxicillin – buy amoxicillin 250mg

http://paxlovid.win/# paxlovid covid

paxlovid cost without insurance: paxlovid generic – paxlovid india

where can i buy cipro online: buy ciprofloxacin – buy cipro

can you buy generic clomid without insurance how to get generic clomid online how can i get clomid for sale

Paxlovid buy online: buy paxlovid online – paxlovid pharmacy

buy amoxicillin 500mg uk: buy amoxicillin from canada – amoxicillin 500 tablet

https://paxlovid.win/# paxlovid for sale

paxlovid covid: paxlovid – paxlovid generic

where can i buy cheap clomid online: can i purchase cheap clomid prices – where to buy cheap clomid online

https://prednisone.bid/# iv prednisone

purchase prednisone no prescription canadian online pharmacy prednisone purchase prednisone

amoxicillin 500 mg tablet price: amoxicillin 500 mg online – purchase amoxicillin online

Hi there would you mind sharing which blog platform you’re working

with? I’m looking to start my own blog soon but I’m having

a hard time selecting between BlogEngine/Wordpress/B2evolution and Drupal.

The reason I ask is because your layout seems different then most blogs and I’m looking for something unique.

P.S My apologies for getting off-topic but I had to ask!

prednisone 40 mg: prednisone daily use – 50mg prednisone tablet

paxlovid buy: paxlovid for sale – paxlovid india

buy paxlovid online Paxlovid buy online paxlovid india

http://paxlovid.win/# п»їpaxlovid

can i buy generic clomid online where can i buy generic clomid how to get clomid pills

https://clomid.site/# generic clomid pills

amoxicillin online no prescription where can i buy amoxicillin over the counter uk amoxicillin 775 mg

ciprofloxacin generic: cipro pharmacy – ciprofloxacin

order cytotec online: buy cytotec online – buy cytotec

http://zithromaxbestprice.icu/# can i buy zithromax over the counter

I?ve read several good stuff here. Definitely value bookmarking for revisiting. I wonder how much attempt you place to make any such magnificent informative site.

tamoxifen alternatives premenopausal: nolvadex 10mg – tamoxifen joint pain

tamoxifen lawsuit tamoxifen lawsuit does tamoxifen cause weight loss

https://doxycyclinebestprice.pro/# buy doxycycline 100mg

buy doxycycline monohydrate: how to buy doxycycline online – order doxycycline

https://lisinoprilbestprice.store/# where can i purchase lisinopril

buy cytotec online: Cytotec 200mcg price – buy cytotec over the counter

http://nolvadex.fun/# tamoxifenworld

nolvadex 20mg: nolvadex vs clomid – tamoxifen alternatives premenopausal

https://cytotec.icu/# buy misoprostol over the counter

how to lose weight on tamoxifen tamoxifen postmenopausal tamoxifenworld

http://lisinoprilbestprice.store/# lisinopril 12.5 mg 10 mg

buy 40 mg lisinopril: lisinopril 2.5 mg tablet – how much is 30 lisinopril

lisinopril average cost: price of lisinopril 20 mg – lisinopril from mexico

https://cytotec.icu/# buy cytotec pills

zithromax over the counter uk: zithromax 500mg – zithromax for sale cheap

can you buy zithromax online zithromax 500mg price zithromax capsules price

http://doxycyclinebestprice.pro/# doxy

tamoxifen menopause: tamoxifen and depression – tamoxifen moa

https://nolvadex.fun/# what is tamoxifen used for

http://lisinoprilbestprice.store/# buy prinivil online

zithromax 500 price: buy zithromax 1000 mg online – zithromax 500 price

world pharmacy india: Medicines from India to USA online – india online pharmacy indiapharm.llc

http://indiapharm.llc/# indian pharmacies safe indiapharm.llc

cheap canadian pharmacy online: Canada Drugs Direct – canadianpharmacy com canadapharm.life

mexico drug stores pharmacies: mexican pharmacy – mexico drug stores pharmacies mexicopharm.com

http://canadapharm.life/# legit canadian pharmacy online canadapharm.life

indian pharmacies safe: India pharmacy of the world – reputable indian online pharmacy indiapharm.llc

http://canadapharm.life/# canadianpharmacymeds com canadapharm.life

buying prescription drugs in mexico online: Medicines Mexico – mexico drug stores pharmacies mexicopharm.com

https://indiapharm.llc/# Online medicine order indiapharm.llc

п»їlegitimate online pharmacies india: indian pharmacy – reputable indian pharmacies indiapharm.llc


mexican mail order pharmacies mexican pharmacy mexican rx online

medicine in mexico pharmacies medication from mexico pharmacy buying prescription drugs in mexico online

buying prescription drugs in mexico best online pharmacies in mexico pharmacies in mexico that ship to usa

mexico drug stores pharmacies mexico pharmacies prescription drugs purple pharmacy mexico price list

mexico drug stores pharmacies buying prescription drugs in mexico online mexican pharmacy

mexican pharmacy medication from mexico pharmacy mexican rx online

reputable mexican pharmacies online mexico drug stores pharmacies buying prescription drugs in mexico online

mexico drug stores pharmacies mexican border pharmacies shipping to usa medication from mexico pharmacy

buying from online mexican pharmacy mexico pharmacy mexican drugstore online

buying from online mexican pharmacy mexico pharmacy reputable mexican pharmacies online

pharmacies in mexico that ship to usa mexico drug stores pharmacies mexico pharmacy

п»їbest mexican online pharmacies reputable mexican pharmacies online mexico drug stores pharmacies

mexican mail order pharmacies mexican rx online best online pharmacies in mexico

buying prescription drugs in mexico mexico drug stores pharmacies mexican pharmacy

mexico pharmacy п»їbest mexican online pharmacies purple pharmacy mexico price list

stromectol in canada price of ivermectin ivermectin lice oral

ivermectin 6: ivermectin cream 1 – stromectol tablets for humans for sale

http://buyprednisone.store/# where to get prednisone

lasix furosemide Over The Counter Lasix furosemide 100mg

875 mg amoxicillin cost: over the counter amoxicillin canada – price for amoxicillin 875 mg

buy prednisone from india: prednisone brand name canada – buy prednisone 10mg

ivermectin pills human stromectol ivermectin buy ivermectin 3 mg tabs

lisinopril online uk: lisinopril hct – lisinopril 5mg pill

https://stromectol.fun/# stromectol uk buy

lisinopril 60 mg daily lisinopril buy online zestoretic 25

lisinopril 20mg buy: lisinopril 5 mg buy online – lisinopril 10mg online

cheapest price for lisinopril prices for lisinopril zestril

buy amoxicillin online without prescription: where to buy amoxicillin pharmacy – can i buy amoxicillin online

ivermectin cream canada cost: ivermectin cream 5% – order stromectol online

lisinopril 20 25 mg rx 535 lisinopril 40 mg cost of lisinopril 20 mg

lasix medication: Buy Lasix – lasix uses

amoxil pharmacy: amoxicillin 1000 mg capsule – amoxicillin 200 mg tablet

lisinopril 5 mg daily: where can i purchase lisinopril – lisinopril medication generic

prednisone 4mg medicine prednisone 10mg prednisone without a prescription

price of amoxicillin without insurance: how much is amoxicillin prescription – can you purchase amoxicillin online

furosemida Buy Furosemide lasix 100 mg

http://amoxil.cheap/# amoxicillin tablet 500mg

buy prednisone 20mg without a prescription best price: over the counter prednisone cheap – where to buy prednisone 20mg

lasix 40mg lasix furosemide 40 mg lasix 40mg

cost of lisinopril 2.5 mg: lisinopril 12.5 – lisinopril 20 mg sale

lisinopril otc: zestril 10 mg online – buy lisinopril in mexico

furosemide 100mg Buy Furosemide lasix generic

how to buy stromectol: ivermectin 6 mg tablets – stromectol 3mg tablets

furosemide 40 mg Buy Furosemide lasix 40 mg

can i buy lisinopril in mexico: lisinopril 4 mg – lisinopril 10mg online

furosemide 100mg: Buy Lasix – generic lasix

ivermectin drug stromectol liquid ivermectin cream 5%

lasix dosage: Buy Furosemide – lasix uses

lisinopril 25 mg lisinopril 20 mg buy cost of lisinopril in canada

furosemide 40 mg: Over The Counter Lasix – lasix generic

http://lisinopril.top/# lisinopril generic brand

ivermectin lotion 0.5: ivermectin generic name – ivermectin 0.5

order prednisone 10mg prednisone 15 mg daily how can i get prednisone online without a prescription

cost of amoxicillin prescription: amoxicillin 500 mg online – buy amoxicillin 500mg canada

india online pharmacy indian pharmacies safe world pharmacy india
