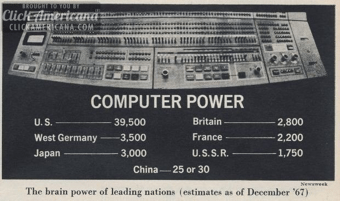

During the early computer era of the 1960s, it was thought there would only be the need for a few dozen computers. By the 1970s, there were just over 50,000 computers in the world.

Computers have grown in power by orders of magnitude since. They have become more intelligent in the way they interact with humans, starting with switches and buttons then punch cards and on to the keyboard. Along the way, we added joysticks, the mouse, track pads and the touch screen.

Newspaper image projecting the number of computers in the world in 1967

As each paradigm replaced the last, productivity and utility. In some cases, we cling on to the prior generation’s system to such a degree we think the replacement is little more than a novelty or a toy. For example, when the punch card was the fundamental input system to computers, many computer engineers thought a direct keyboard connection to a computer was “redundant” and pointless because punch cards could move through the chute guides 10 times faster than the best typists at the time [1]. The issue is we see the future through the eyes of the prior paradigms.

Punch card stack of customer data

We Had To Become More Like The Computer Than The Computer had to Become More Like Us

All prior computer interaction systems have one central point in common. They force humans to be more like the computer by forcing the operator to think through arcane commands and procedures. We take it for granted and forget the ground rules we all had to learn and continue to learn to use our computers and devices. I equate this to learning any arcane language that requires new vocabularies as new operating systems are released and new or updated applications are available.

Is typing and gesturing the most efficient way to interact with a computer or device for the vastly most common uses most people have? All of the cognitive (mental energy) and mechanical (physical energy) loads we must induce for even trivial daily routines is disproportionately high for what can be distilled to a yes or no answer. When seen for what it truly is, these old ways are inefficient and ineffective for many of the common things we do with devices and computers.

IBM Keypunch station to encode Punch Cards

What if we didn’t need to learn arcane commands? What if you could use the most effective and powerful communication tool ever invented? This tool evolved over millions of years and allows you to express complex ideas in very compact and data dense ways yet can be nuanced to the width of a hair [2]. What is this tool? It is our voice.

The fundamental reason humans have been reduced to tapping on keyboards made of glass is simply because the computer was not powerful enough to understand our words let alone even begin to decode our intent.

It’s been a long road to get computers to understand humans. It started in the summer of 1952 at Bell Laboratories with Audrey (Automatic Digit Recognizer), the first speaker independent voice recognition system that decoded the phone number digits spoken over a telephone for automated operator assisted calls [3].

At the Seattle World’s Fair in 1962, IBM demonstrated its “Shoebox“ machine. It could understand 16 English words and was designed to be primarily a voice calculator. In the ensuing years there were hundreds of advancements [3].

IBM Shoebox voice recognition calculator

Most of the history of speech recognition was mired in speaker dependent systems that required the user to read a very long story or grouping of words. Even with this training, accuracy was quite poor. There were many reasons for this, much of it was based on the power of the software algorithms and processor power. But in the last 10 years, there have been more advancement than in the last 50. Additionally, continuous speech recognition, where you just talk naturally, has only been refined in the last 5 years.

The Rise Of The Voice First World

Voice based interactions have three advantages over current systems:

Voice is an ambient medium rather than an intentional one (typing, clicking, etc). Visual activity requires singular focused attention (a cognitive load) while speech allows us to do something else.

Voice is descriptive rather than referential. When we speak, we describe objects in terms of their roles and attributes. Most of our interactions with computers are referential.

Voice requires more modest physical resources. Voice based interaction can be scaled down to much smaller and much cheaper form-factors than visual or manual modalities.

The power of voice-based systems has grown powerful with the addition of always-on systems combined with machine learning (Artificial Intelligence), cloud-based computing power and highly optimized algorithms.

Modern speech recognition systems have combined with almost pristine Text-to-Speech voices that so closely resemble human speech, many trained dogs will take commands from the best systems. Viv, Apple’s Siri, Google Voice, Microsoft’s Cortana, Amazon’s Echo/Alexa, Facebook’s M and a few others are the best consumer examples of the combination of Speech Recognition and Text-to-Speech products today. This concept is central to a thesis I have been working on for over 30 years. I call it “Voice First” and it is part of an 800+ page manifesto I have built based around this concept. The Amazon Echo is the first clear Voice First device.

The Voice First paradigm will allow us to eliminate many, if not all, of these steps with just a simple question. This process can be broken out to 3 basic conceptual modes of voice interface operations:

Does Things For You – Task completion:

– Multiple Criteria Vertical and Horizontal searches

– On the fly combining of multiple information sources

– Real-time editing of information based on dynamic criteria

– Integrated endpoints, like ticket purchases, etc.

Understands What You Say – Conversational intent:

– Location context

– Time context

– Task context

– Dialog context

Understands To Know You – Learns and acts on personal information:

– Who are your friends

– Where do you live

– What is your age

– What do you like

In the cloud, there is quite a bit of heavy lifting working at producing an acceptable result. This encompasses:

- Location Awareness

- Time Awareness

- Task Awareness

- Semantic Data

- Out Bound Cloud API Connections

- Task And Domain Models

- Conversational Interface

- Text To Intent

- Speech To Text

- Text To Speech

- Dialog Flow

- Access To Personal Information And Demographics

- Social Graph

- Social Data

The current generation of voice-based computers have limits on what can be accomplished because you and I have become accustomed to doing all of the mechanical work of typing, viewing, distilling, discerning and understanding. When one truly analyzes the exact results we are looking for, most can be answered by a “Yes” or “No”. When the back-end systems correctly analyze your volition and intent, countless steps of mechanical and cognitive load is eliminated. We have recently entered into an epoch where all elements converged to make the full promise of an advanced voice interface truly arrive. W. Edwards Deming [4] quantified the many steps humans need to complete to achieve any task, This was made popular by his thesis of The Shewhart Cycles and PDSA (Plan-Do-Study-Act) Cycles we have become trained to follow when using any computer or software.

The Shorter Path: “Alexa, whats’s my commute look like?”

A Voice First system would operate on the question and calculate the route from the current location to the location that may be implied by time of day and typical destination.

An app system would require you to open your device, select the appropriate app, perhaps a map, localize your current location, pinch and zoom to the destination, scan the colors or icons that represent traffic and then estimate an acceptable insight based on taking in all the information viably present and determine the arrival time.

The implicit and explicit: From Siri And Alexa To Viv

Voice First systems fundamentally change the process by decoding volition and intent using self learning artificial intelligence. The first example of this technology was with Siri [5]. Prior to Siri, systems like Nuance [6] were limited to listening to audio and creating text. Nuance’s technology has roots in optical character recognition and table arrays. The core technology of Siri was not focused just on speech recognition but rather focused primarily on three functions that complement speech recognition:

- Understanding the intent (meaning) of spoken words and generating a dialog with the user in a way that maintains context over time, similar to how people have a dialog

- Once Siri understands what the user is asking for, it reasons how to delegate requests to a dynamic community of web services, prioritizing and blending results and actions from all of them

- Siri learns over time (new words, new partner services, new domains, new user preferences, etc.)

Siri was the result of over 40 years of research funded by DARPA. Siri Inc. was a spin off of SRI Intentional and was a standalone app before Apple acquired the company in 2011 [5].

Dag Kittlaus and Adam Cheyer were cofounders of Siri Inc. and originally planned to stay on and guide their vision on a Voice First system that motivated Steve Jobs to personally start negotiations with Siri Inc.

Siri has not lived up to the grand vision originally imagined by Steve. Although improving, there has yet to be a full API and skills performed by Siri have not yet matched the abilities of the original Siri app.

This set the stage for Amazon and the Echo product [7]. Amazon surprised just about everyone in technology when it was announced on November 6, 2014. This was an outgrowth of a Kindle e-book reader project that began in 2010 and the acquisition of voice platforms it acquired from Yap, Evi, and IVONA.

Amazon Echo circa 2015

The original premise of Echo was to be a portable book reader built around 7 powerful omni-directional microphones and a surprisingly good WiFi/Bluetooth speaker (with separate woofer and tweeter). This humble mission soon morphed into a far more robust solution that is just now taking form for most people.

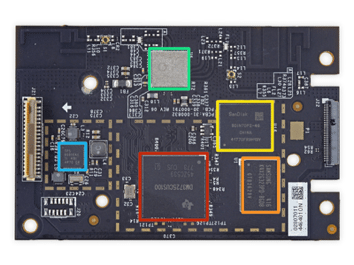

Beyond the the power of the Echo hardware is the power of Amazon Web Services (AWS) [8]. AWS is one of the largest virtual computer platforms in the world. Echo simply would not work without this platform. The electronics in Echo are not powerful enough to parse and respond to voice commands without the millions of processors AWS has at its disposal. In fact, the digital electronics in Echo are centered around 5 chips and perform little more than recording a live stream to AWS servers and sending the resulting audio stream back to be played through the analog electronics and speakers on Echo.

Amazon Echo digital electronics board

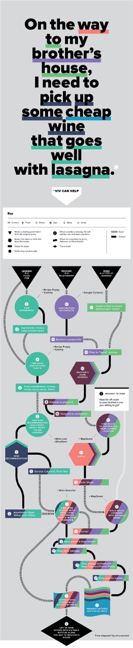

Today with a run away hit on their hands, Amazon recently opened up the system for developers with the ASK program [10]. Amazon also has APIs that connect to an array of home automation systems. Yet simple things like building a shopping list and converting it to an order on Amazon is nearly impossible to do.

Sample of Alexa ASK skills flowchart

Echo is a step forward from the current incarnation of Siri, not so much for the sophistication of the technology or the open APIs, but for the single purpose dedicated Voice First design. This can be experienced after a few weeks of regular use. The always on, always ready low latency response creates a personality and a sense of reliance as you enter the space.

Voice First devices will span the simple to the complex to the reasonably sized to a form factor not much larger than a Lima beam.

Hypothetical Voice First device with all electronics in ear including microphone, speaker, computer, WiFi/Bluetooth and battery

The founders of Siri spent a few years thinking about the direction of Voice First software after they left Apple. The results of this thinking will begin with the first public demonstrate of Viv this spring. Viv [11] is the next generation from Dag and Adam picking up where Siri left off. Viv was developed at SixFive Labs, Inc (the stealth name of the company) with the inside “easter egg” element that the roman numerals are VI V gave a connection to the Viv name.

Viv is orders of magnitude more sophisticated in the way it will act on the dialog you create with it. The more advanced models of Ontological Recipes and self learning will make interactions with Viv more natural and intelligent. This is based upon a new paradigm the Viv team has created called “Exponential Programming”. They have filed many patents central to this concept. As Viv is used by thousand to millions of users, asking perhaps thousands of questions per second, the learning will grow exponentially in short order. Siri and the current voice platforms currently can’t do anything that coders haven’t explicitly programmed it for. Viv solves this problem with the “Dynamically Evolving Systems” architecture that operates directly on the nouns, pronouns, adjectives and verbs.

Viv flow chart example first appearing in Wired Magazine in 2015

Viv is an order of magnitude more useful than currently fashionable Chat Bots. Bots will co-exist as a subset to the more advanced and robust interactive paradigms. Many users will first be exposed to Chat Bots through the anticipated release of a complete Bot platform and Bot store by Facebook.

Viv’s power comes from how it models the lexicon of each sentence with each word in the dialog and acts on it in parallel to produce a response almost instantaneous. These responses will come in the form of a chained dialog that will allow for branching based on your answers.

Viv is built around three principles or “pillars”:

It will be taught by the world

it will know more than it is taught

it will learn something every day.

The experience with Viv will be far more fluid and interactive than any system publicly available. The results will be a system that will ultimately predict your needs and allow you to almost communicate in the shorthand dialogs found common in close relationships.

In The Voice First World, Advertising And Payments Will Not Exist As They Do Today

In the Voice First world many things change. Advertising and payments will particularly be changed and, in themselves, become new paradigms for both merchants and consumers. Advertising as we know it will not exist primarily because we would not tolerate commercial intrusions and interruptions in our dialogs. It would be equivalent to having a friend break into an advertisement about a new gasoline.

Payments will change in profound ways. Many consumer dialogs will have implicit and explicit layered Voice Payments with multiple payment type. Voice First systems will mediate and manage these situations based on a host of factors. Payments companies are not currently prepared for this tectonic shift. In fact, some notable companies are going in the opposite direction. The companies that prevail will have identified the Ontological Recipe technology to connect merchants to customers.

This new advertising and payments paradigm actually form a convergence. Voice Commerce will become the primary replacement for advertising and Voice Payments are the foundation to Voice Commerce. Ontologies [12] and taxonomies [13] will play an important part of Voice Payments. The shift will impact what we today call online, in-app and retail purchases. The least thought through of the changes is the impact on face to face retail when the consumer and the merchant interact with Voice First devices.

Of course Visa, MasterCard and American Express will play an important part of this future and all the payment companies between them and the merchant will need to rapidly change or truly be disrupted. The rate of change will be more massive and pervasive than anything that has come before.

This new advertising and payments paradigm will impact every element of how we interact with Voice First devices. Without human mediated searches on Google, there is no pay-per click. Without a scan of the headlines at your favorite news site, there is no banner advertising.

The Intelligent Agents

A major part of the Voice First paradigm is a modern Intelligent Agent (also known as Intelligent Assistant). Over time, all of us will have many, perhaps dozens, interacting with each other and acting on our behalf. These Intelligent Agents will be the “ghost in the machine” in Voice First devices. They will be dispatched independently of the fundamental software and form a secondary layer that can fluidly connect between a spectrum of services and systems.

Voice First Enhances The Keyboard And Display

Voice First devices will not eliminate display screens as they will still need to be present. However, they will be ephemeral and situational. The Voice Commerce system will present images, video and information for you to consider or evaluate on any available screen. Much like AirPlay but with locational intelligence.

There is also no doubt keyboards and touch screens will still exist. We will just use them less. Still, I predict in the next ten years, your voice is not going to navigate your device, it is going to replace your device in most cases.

The release of Viv will influence the Voice First revolution. Software and hardware will start arriving at an accelerated rate and existing companies will be challenged by startups toiling away in garages and on kitchen tables around the world. If Apple were so inclined, with about a month’s work and a simple WiFi/Bluetooth Speaker with a multi-axis microphone extension, the current Apple TV [14] could offer a wonderful Voice First platform. In fact, I have been experimenting with this combination with great success on a food ordering system. I have also been experimenting on the Amazon Echo platform and built over 45 projects, one of which is a commercial grade hotel room service application complete with food ordering and a virtual store and mini bar along with thermostat setting and light controls for a boutique luxury hotel chain.

I am in a unique position to see the Voice First road ahead because of my years as a voice researcher and payments expert. As a coder and data scientist for the last few months, I have built 100s of simple working demos in the top portion of the Voice First use cases.

A Company You Never Heard Of May Become The Apple Of Voice First

The future of Voice First is not really an Apple vs. Amazon vs. Viv situation. The market is huge and encompasses the entire computer industry. In fact, I assert many existing hardware and software companies will have a Voice First system in the next 24 months. In addition to companies like Amazon and Viv, the first wave already have a strong cloud and AI development background. However, the next wave, like much of the Voice First shift, will likely come from companies that have not yet started or are in the early stages today.

With the Apple TV, Apple has an obvious platform for the next Voice First system. The current system is significantly hindered as it does not typically talk back in the current TV-centered use case. There is also the Apple Watch with a similar impediment of its limitation in the voice interface — it doesn’t have any ability to talk back. I can see a next version with a useable speaker but centered around a Bluetooth headset for voice playback.

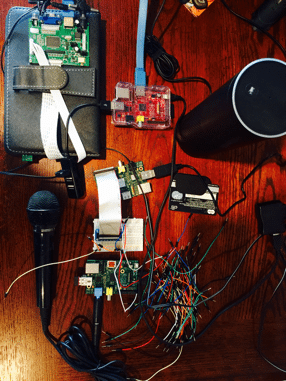

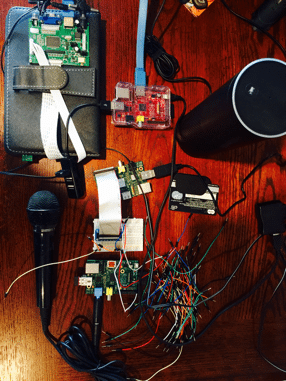

Amazon has a robust head start and has already activated the drive and creativity of the developer community. This inertia will continue and spread across non-Amazon devices. This has already started with Alexa on Raspberry PI [15], the $35 devices originally designed for students to learn to code but have now become the center of many products. Alexa is truly a Voice First system not tied to any hardware. I have developed many applications and hardware solutions using less than $30 worth of hardware on the Raspberry PI Zero platform.

One of My Raspberry PI Alexa + Echo experimentations with Voice Payments

Viv will, at the onset, be a software only product. Initially it will be accessed via apps that have licensed the technology. I also predict a deep linking into some operating systems. Ultimately if not acquired, Viv is likely to create reference grade devices that may rapidly gain popularity.

The first wave of Voice First devices will likely come from these companies with consumer grade and enterprise grade systems and devices:

- Apple

- Microsoft

- IBM

- Oracle

- Salesforce

- Samsung

- Sony

Emotional Interfaces

Voice is not the only element of the human experience making a comeback. In my 800 page voice manifesto I assert that emotional intent facial recognition, along with hand and body gestures, will become a critical addition to the integration of the Voice First future. Although just like voice, facial expression decoding sounds like a novelty. Our voices, combined with a decoding in real-time of the 43 muscles that form an array of expressions, can communicate not only deeper meaning but deeper understanding of volition and intent.

Microsoft Emotion API showing facial scoring

Microsoft’s Cognitive Services has a number of APIs centered around facial recognition. In particular, the Emotion API [15] is most useful. It is currently limited to a pallet of 8 basic emotions with a scoring system that allows weighting in each category in real-time. I have seen far more advanced systems in development that will track nuanced micro-movements to better understand emotional intent.

The Disappearing Computer And Device

Some argue voice systems will become an appendage to our current devices. These systems are currently present on many devices but they have failed to captivate on a mass scale. Amazon’s Echo captivates because of the dynamics present when Voice First defines a physical space. The room feels occupied by an intelligent presence. It is certain that existing devices will evolve. But it is also certain Voice First will enhance these devices and, in many cases, replace them.

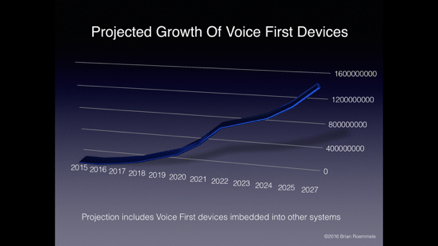

The growth of Voice First devices in 10 years will rival the growth of tablet sales

What becomes of the device, visual operating system, the app when there is little or no need to touch it? You can see just how disrupted the future is for just about every element of technology and business.

The Accelerating Rate Of Change

We have all rapidly acclimated to the accelerating rate of change this epoch has presented as exampled in the monumental shift from mechanical keyboards of cell phones, as typified by the Blackberry device of 2007 at the apex of its popularity and then shift to typing on a glass touch screen brought about with the iPhone release. All quarters, from the technological sophisticates to the common business user to the typical consumer, said they would never give up the world of the mechanical keyboard [16]. Yet, they did. By 2012, the shift had become so cemented in the direction of glass touch screens and simulated keyboards, no one was going backwards. In ten years, few will remember the tremendous amount of cognitive and mechanical steps we went through just to glean simple answers.

Typical iPhone vs. Blackberry comparisons in 2008

One Big Computer As One Big Brain

In many ways we have come full circle to the predictions made in the 1960s that there will be no need for more than a few dozen computers in the world. The huge AI self-learning systems like Viv will use the “hive mind” of the crowd. Some predict it will render our local computers little more than “dumb pipes” with a conduit to one or a few very smart computers in the cloud. We will all face the positive and the negative aspects this will bring.

In 2016, we are at the precipice of something grand and historic. Each improvement in the way we interact with computers brought about long term effects nearly impossible to calculate. Each improvement of computer interaction lowered the bar for access to a larger group. Each improvement in the way we interact with computers stripped away the priesthoods, from the 1960s computer scientists on through to today’s data science engineers. Each improvement democratized access to vast storehouses of information and potentially knowledge.

The last 60 years of computing humans were adapting to the computer. The next 60 years the computer will adapt to us. It will be our voices that will lead the way; it will be a revolution.

______

[1] http://www.columbia.edu/cu/computinghistory/fisk.pdf

[2] https://en.wikipedia.org/wiki/Origin_of_speech

[3] http://en.citizendium.org/wiki/Speech_Recognition

[4] https://en.wikipedia.org/wiki/W._Edwards_Deming

[5] http://qr.ae/RUWZH7

[6] https://en.wikipedia.org/wiki/Nuance_Communications

[7] http://qr.ae/RUWSGC

[8] https://en.wikipedia.org/wiki/Amazon_Web_Services

[9] https://en.wikipedia.org/wiki/Amazon.com#Amazon_Prime

[10] https://developer.amazon.com/appsandservices/solutions/alexa/alexa-skills-kit

[11] http://viv.ai

[12] https://en.wikipedia.org/wiki/Ontology_%28information_science%29

[13] https://en.wikipedia.org/wiki/Taxonomy_(general)

[14] https://en.wikipedia.org/wiki/Apple_TV

[15] https://www.microsoft.com/cognitive-services/en-us/emotion-api

[16] http://crackberry.com/top-10-reasons-why-iphone-still-no-blackberry

Why not make Alexa hands free? Voice first devices seem problematic.

Hi Vadim, great point. Alexa and the Voice First devices I predict will be hands-free and always on.

A voice based interface would be useful in some situations, but worse than useless in others.

In an office environment, you don’t want to distract other people trying to work by saying “open the spreadsheet, go to column 80, change 4 to 8, recalculate.” Unless the office environment abandons cubicles in favour of offices with doors that close (yeah, right), dictation based UI is not going to go over well.

In public, you don’t want to let anyone nearby who stops to listen to hear you dictating your private messages to the machine. Actually, you don’t want your friends or family members to hear you dictating your IMs, either. So make that “anywhere you aren’t completely alone, you don’t want this.”

There’s also the problem that talking is a more wooly and vague form of communication than typing. Because we can see the words we have written, it helps guide and channel the flow of thoughts, whereas talking is a lot more free wheeling and less cogent. I can say from experience that dictating an email that reads in a clear and professional manner is quite a bit more difficult than just typing the damn thing.

Finally, speech first or speech only is a foolish goal because there are so many things we ask computers to do that are hard to put into words, but easy to put into gestures — it’s a lot easier to mouse or tap to indicate what part of the image needs to be zoomed in on than to say “zoom in on the lower two thirds left hand quadrant, no more to the left,” and so on.

I understand your point. The part that may be overlooked is the AI. You will not really be replacing typing with dictation. The AI will parse what you are doing via Intelligent Agents, that already exist today and formulate a response. The example of typing a letter would be the similar path that is part of all high end CRM systems where the context of the message is analyzed and a grouping of responses are presented for response. Some are so well done that almost no human interaction is required and the messages are far more responsive and personalized then a distracted and perhaps uninterested worker would compose. This is already here adding voice to make it more efficient is the big part.

As for navigation. We navigate to get at information to draw a conclusion. Ask yourself in a vast majority of cases is the conclusion really just a binary yes or no? A spread sheet example you present, you ask “Did we lose money in August?” “What sales groups are doing best?’ etc. This is not voice just replacing a manual process it is voice directing AI.

In the case of navigation of information on the screen, the context would be relevant. The system would understand the context and anticipate what should be branched from each situation.

As for an office environment, people are known to talk on a phone in these environments and have constant conversations with fellow workers. This is 99% of office environments. There is little difference talking to a very intelligent and anticipating Voice First system.

Finally screens do not disappear, even manual entry does not disappear. Air gestures would guide some of the responses as screens will be present to review information that needs to be displayed.

In the end what changes is AI makes all things more anticipating removing humans from manual processes using a compact and efficient millions of year old interface, the human voice.

My parents are getting on in age, to the point were eyesight and touch are failing. I figured Google Voice would be nice for them: no more typing, dialing, looking for icons.. it works fine for me.

Total failure., 3 main reasons

1- it gets confused by other voices. Even a male voice on the TV at the other end of the room messes up its recog of my mom’s voice.

2- Real People ™ stutter, mumble, hesitate, interject. “Google, when is the next HEY, GET SOME MILK TOO PLEASE, hum, next showing of Boyhood ?”

3- it needs online

“There will be no need for more than a few dozen computers in the world.”

The statement will only be true if you claim the phones everybody uses – with their ever-increasing power – are not computers. Brian Roemmele, the author of an “800 page voice manifesto” should try saying that to Tim Cook.

That statement was one made in history predicting how many PCs would be the world. Brian was not saying that he was referencing a quote from history.

Sounds like Skynet is getting closer.

Fascinating article. Thank you.

It seems to me as that ‘always on,’ ‘always listening’ technology streaming my life’s details into the web is incredibly invasive. I am not, and I hope the world is not, ready to hand all personal information to some hive computer in the sky.

And voice-based payments… What could possibly go wrong with that? How many thousands of ways could that be hacked?

Technology for technology’s sake is a foolish idea.

There are many great things that may come from advancements in voice recognition. But to project that all device-based interactions will someday have a cloud component is insane.

This is a solution to a non-existent problem that creates more problems than it solves.

I hear ya. Turns out this is exactly what Amazon Echo does. It is also one of the reasons it became an astounding success over the last year. Indeed I have many doubts about what happens with the information that become exformation. Thus far Amazon has a number of tools to show you what was heard and to have it removed from the short term cache. Voice payments like any modern payment system will have a number of rather robust safeguards. Some will be obvious and some will be less obvious. As it stands today, there are 3 stealth startups that are working on voice print identity with some variations. Amazon has tested voice print identity and has a discrimination of 99%. Also can detect if you are actually in the room or a recording is being played back. No for of payments is 100% secure, even Blockchain to some degree.

The cloud component is the only way currently that all the modern voice recognition devices operate. For example Echo has no local voice synthesizer nor does it decode the speech. From this stand point it is little more than a conduit for the club computer that creates an audio file on the file to respond to the audio file assembled locally and parsed in the cloud. Moore’s law will meet this at some point but that will be in the later part of 10 years.

I think this whole program of trying to make computers think more like humans is ill-conceived and headed totally in the wrong direction.

The tedium and fussiness of having to use arcane, finicky, and precisely defined commands to get the computer to do what we want it to do, i.e. solve some problem, has a benefit that most people overlook: it forces the programmer to meticulously break down and analyse the problem being solved. That is, it enforces mental discipline and clear thinking leading to in depth understanding of the problem being solved. This in turn forces us to jettison preconceived notions, intellectual prejudices, and errors arising from logical shortcuts.

If computers somehow are made to think like humans (which I think will never happen, at best they can be made to appear as if they think like humans.), won’t they make the same mental mistakes that humans make? For example, intuition is an amazing mental process. It enables us to come up with completely unexpected but highly effective solutions to problems. But it can also lead to horrendous blunders. Do we really want computers to be making such blunders? Instead of computers being a machine that forces us to be disciplined thinkers, it becomes a machine that commits the same mental errors that humans are prone to.

I understand. The thing is as you stated:

“precisely defined commands to get the computer to do what we want it to do, i.e. solve some problem, has a benefit that most people overlook: it forces the programmer to meticulously break down and analyse the problem being solved”

In the Voice First world driven by AI and dynamic learning, it is precisely the computer that “meticulously break down and analyse the problem being solved” the computer has far more discipline,less distractions and dynamic notions with no intellectual prejudices.

We can drag a garden hoe through a field or we can sit atop a combine in a cubicle that computer controls the lines being cut with GPS+ accuracy based on 10 data points like exact elevation and 100 year flood plains. We are moving from the field and to the top of the combine with Voice First + AI.

Computers are not being made to think like humans, as you stated this will likely and hopefully never happen. Computers will learn to understand us, rather then like I stated in the last 60 years we were forced to think like them. Just like the farmer, we can remove ourselves from the manual labor of typing and and viewing to stating what we need and letting the computer decode volition and intent. Over time this understanding grows. We get about the business of hopefully doing better things.

“Over time this understanding grows.” And in the meantime we allow the computer to make mistakes as it “learns”? Language evolves, and even if the computer learns the current meaning of words and phrases, what if a word meaning shifts and the user is using the new meaning while the computer understands it to be the old? “Hook up” is an example of a phrase whose meaning has shifted a non-trivial way. (Although it’s probably not relevant in the context of interfacing with a computer. At least I hope so.)

What if the mistake resulting from the computer misunderstanding the user has severe consequences? The precaution to take, of course. is not to issue any command using the word in it’s new meaning because the computer will misunderstand you. But then that’s back to us “thinking like computers rather than computers thinking like us.” The old way though, we probably have a clearer idea of whether or not the computer precisely understands the commands we issued, the new way being proposed, which I presume, perhaps mistakenly, is all about natural language commands, we’ll always wonder whether a new command we issue will be precisely understood.

This statement “stating what we need and letting the computer decode volition and intent” is, I think, not truly attainable. At best we can only make a very good simulation of it.

I appreciate your insights.

You and I use the same system Al uses. In fact, the hardest task humans overcome with no “intelligence” is the profoundly almost impossible task of learning to walk. It is pure try and fail and try on a unprecedented scale that many will never achieve again in their lives. Learning to walk is extremely dangerous and unfortunately costs 1000s of lives each year. Yet each of us born with functioning brains and legs indeed over came the odds and obstacles and learned to walk. Ask anyone who lost the ability to remember how to walk just how monumental the task is later in life. Learning is and always will be limited scope trial and error.

I do not advise any Intelligent Agent using AI let wild without any guidelines lose in any environment other then simple learning. This means the human becomes comfortable with the scope of what the Intelligent Agent and AI is doing. In any system one can speculate about the worse case, the doom and gloom. In fact this very argument has been used against computers since the dawn of the computer age. We hear about “human” error on the misplacement of a comma in a bank account, about how dates are handled after the year 2000. Books have been written both science fact and science faction on how the simple computer could get a “bug’ (literally) and cause havoc. You and I ignore this nonsense today.

We will not be making new commands in the Voice First world, the Intelligent Agents will 24/7 learn about us and our volition and intent. This is not trivial nor is it insurmountable. It is not 20 years away but in many ways here and now in the garages of many inventors I know today. Commands are a vestige of us learning to be like the machine and in time will become like the punch card and mag tape drives of the past. You in I will talk about what we want to get done or what we want to know, we will not reach for an adjustable wrench and open up the hood of the metaphorical car engine and adjust the carburetor.

As per your assertion: “This statement “stating what we need and letting the computer decode volition and intent” is, I think, not truly attainable. At best we can only make a very good simulation of it.”

Indeed this is correct, my thesis is not about a “Singularity”. Of course it is a simulation of the human. I feel strongly that humans will define the future for a very long time. The computer will aspire to be more like us.

“As each paradigm replaced the last, productivity and utility. In some cases…”

I feel like there is a word or words missing there. Admittedly, it could be my reading comprehension that’s missing.

Joe

Indeed, it was short a word. Should be: “As each paradigm replaced the last, productivity and utility increases”

Interesting, thank you.

I’m not sure what to think of the probability of rapid technical and business success of that stuff. I’m not a huge AI/bot fan, nor a huge speech-as-a-UI fan, but that’s maybe colored by experiences with early AI/bots and speech recog/synth. It’d be fun to see those actually become competent, 20 years on, but I still can’t bear the “read aloud” function of my PC and ebook reader.

What about privacy with voice first payment ?

Awesome! Its genuinely remarkable post, I have got much clear idea regarding from this post.

Great information shared.. really enjoyed reading this post thank you author for sharing this post .. appreciated

Good post! We will be linking to this particularly great post on our site. Keep up the great writing

For the reason that the admin of this site is working, no uncertainty very quickly it will be renowned, due to its quality contents.

very informative articles or reviews at this time.

naturally like your web site however you need to take a look at the spelling on several of your posts. A number of them are rife with spelling problems and I find it very bothersome to tell the truth on the other hand I will surely come again again.

That’s good, but I still don’t understand the purpose of this page posting, no or what and where do they get material like this.

This is my first time pay a quick visit at here and i am really happy to read everthing at one place

Great information shared.. really enjoyed reading this post thank you author for sharing this post .. appreciated

Nice post. I learn something totally new and challenging on websites

Pretty! This has been a really wonderful post. Many thanks for providing these details.

Nice post. I learn something totally new and challenging on websites

I do not even understand how I ended up here, but I assumed this publish used to be great

I just like the helpful information you provide in your articles

Cool that really helps, thank you.

Nice post. I learn something totally new and challenging on websites

very informative articles or reviews at this time.

This was beautiful Admin. Thank you for your reflections.

This is my first time pay a quick visit at here and i am really happy to read everthing at one place

Awesome! Its genuinely remarkable post, I have got much clear idea regarding from this post.

I do not even understand how I ended up here, but I assumed this publish used to be great

Hi there to all, for the reason that I am genuinely keen of reading this website’s post to be updated on a regular basis. It carries pleasant stuff.

This was beautiful Admin. Thank you for your reflections.

You’re so awesome! I don’t believe I have read a single thing like that before. So great to find someone with some original thoughts on this topic. Really.. thank you for starting this up. This website is something that is needed on the internet, someone with a little originality!

Very well presented. Every quote was awesome and thanks for sharing the content. Keep sharing and keep motivating others.

Pretty! This has been a really wonderful post. Many thanks for providing these details.

Pretty! This has been a really wonderful post. Many thanks for providing these details.

I am truly thankful to the owner of this web site who has shared this fantastic piece of writing at at this place.

Nice post. I learn something totally new and challenging on websites

I truly appreciate your technique of writing a blog. I added it to my bookmark site list and will

Very well presented. Every quote was awesome and thanks for sharing the content. Keep sharing and keep motivating others.

I just like the helpful information you provide in your articles

Awesome! Its genuinely remarkable post, I have got much clear idea regarding from this post

I very delighted to find this internet site on bing, just what I was searching for as well saved to fav

Nice post. I learn something totally new and challenging on websites

Try to slowly read the articles on this website, don’t just comment, I think the posts on this page are very helpful, because I understand the intent of the author of this article.

This was beautiful Admin. Thank you for your reflections.

https://www.google.rs/url?sa=j&url=https://bambu4d.com

I very delighted to find this internet site on bing, just what I was searching for as well saved to fav

I really like reading through a post that can make men and women think. Also, thank you for allowing me to comment!

Awesome! Its genuinely remarkable post, I have got much clear idea regarding from this post.

Hi there to all, for the reason that I am genuinely keen of reading this website’s post to be updated on a regular basis. It carries pleasant stuff.

naturally like your web site however you need to take a look at the spelling on several of your posts. A number of them are rife with spelling problems and I find it very bothersome to tell the truth on the other hand I will surely come again again.

I think the content you share is interesting, but for me there is still something missing, because the things discussed above are not important to talk about today.

I just like the helpful information you provide in your articles

This was beautiful Admin. Thank you for your reflections.

Pretty! This has been a really wonderful post. Many thanks for providing these details.

Cool that really helps, thank you.

I’m often to blogging and i really appreciate your content. The article has actually peaks my interest. I’m going to bookmark your web site and maintain checking for brand spanking new information.

Hi there to all, for the reason that I am genuinely keen of reading this website’s post to be updated on a regular basis. It carries pleasant stuff.

Hello colleagues, how is everything, and what you want

That’s good, but I still don’t understand the purpose of this page posting, no or what and where do they get material like this.

naturally like your web site however you need to take a look at the spelling on several of your posts. A number of them are rife with spelling problems and I find it very bothersome to tell the truth on the other hand I will surely come again again.

This is my first time pay a quick visit at here and i am really happy to read everthing at one place

Hi there to all, for the reason that I am genuinely keen of reading this website’s post to be updated on a regular basis. It carries pleasant stuff.

I think this post makes sense and really helps me, so far I’m still confused, after reading the posts on this website I understand.

It’s really a nice and useful piece of info. I’m glad that you shared this useful info with us. Please keep us informed like this. Thanks for sharing.

Pretty! This has been a really wonderful post. Many thanks for providing these details.

Very helpful, Don’t forget to visit my website Bambu4d

Don’t forget to watch videos on the YouTube channel Bambu4d

Try to slowly read the articles on this website, don’t just comment, I think the posts on this page are very helpful, because I understand the intent of the author of this article.

I think the content you share is interesting, but for me there is still something missing, because the things discussed above are not important to talk about today.

You’re so awesome! I don’t believe I have read a single thing like that before. So great to find someone with some original thoughts on this topic. Really.. thank you for starting this up. This website is something that is needed on the internet, someone with a little originality!

Pretty! This has been a really wonderful post. Many thanks for providing these details.

Hi! I just wanted to ask if you ever have any issues with hackers? My last blog (wordpress) was hacked and I ended up losing months of hard work due to no back up. Do you have any solutions to stop hackers?

In the great scheme of things you’ll receive a B- just for effort and hard work. Where exactly you confused me was first in the details. As they say, the devil is in the details… And that couldn’t be much more true in this article. Having said that, permit me inform you exactly what did work. The text is definitely highly powerful and that is possibly why I am taking an effort in order to comment. I do not really make it a regular habit of doing that. Next, even though I can certainly see a leaps in reason you come up with, I am not necessarily confident of how you appear to unite the details which inturn produce your conclusion. For now I will yield to your point but hope in the future you actually connect your dots much better.

Yet another issue is really that video gaming has become one of the all-time greatest forms of fun for people of nearly every age. Kids participate in video games, plus adults do, too. The actual XBox 360 has become the favorite video games systems for individuals that love to have a huge variety of video games available to them, in addition to who like to experiment with live with other folks all over the world. Thank you for sharing your opinions.

This was beautiful Admin. Thank you for your reflections.

It?s really a great and helpful piece of information. I?m glad that you shared this useful information with us. Please keep us informed like this. Thanks for sharing.

There is definately a lot to find out about this subject. I like all the points you made

I?m impressed, I have to say. Actually hardly ever do I encounter a blog that?s both educative and entertaining, and let me inform you, you have hit the nail on the head. Your concept is outstanding; the issue is something that not sufficient persons are talking intelligently about. I’m very completely happy that I stumbled across this in my seek for something referring to this.

I’m often to blogging and i really appreciate your content. The article has actually peaks my interest. I’m going to bookmark your web site and maintain checking for brand spanking new information.

of course like your website but you need to check the spelling on quite a few of your posts. Several of them are rife with spelling issues and I find it very troublesome to tell the truth nevertheless I?ll definitely come back again.

I like the efforts you have put in this, regards for all the great content.

Hey very cool website!! Man .. Beautiful .. Amazing .. I will bookmark your blog and take the feeds also?I’m happy to find so many useful information here in the post, we need develop more strategies in this regard, thanks for sharing. . . . . .

Thanks for your article. One other thing is that individual American states have their own personal laws of which affect home owners, which makes it extremely tough for the Congress to come up with a fresh set of rules concerning property foreclosures on people. The problem is that a state provides own legal guidelines which may have impact in a damaging manner with regards to foreclosure plans.

I was just seeking this info for a while. After 6 hours of continuous Googleing, finally I got it in your website. I wonder what’s the lack of Google strategy that do not rank this kind of informative sites in top of the list. Normally the top web sites are full of garbage.

I do like the manner in which you have presented this specific situation plus it really does give me a lot of fodder for thought. On the other hand, from everything that I have observed, I only trust as the comments pile on that people today continue to be on point and in no way embark on a soap box involving the news du jour. Yet, thank you for this fantastic piece and whilst I can not really go along with this in totality, I respect your perspective.

This will be a terrific web site, could you be involved in doing an interview regarding how you designed it? If so e-mail me!

Good post! We will be linking to this particularly great post on our site. Keep up the great writing

Thanks for another informative site. Where else may just I am getting that kind of info written in such an ideal method? I’ve a mission that I’m just now operating on, and I’ve been at the glance out for such info.

There is definately a lot to find out about this subject. I like all the points you made

Thanks for your post on the traveling industry. I would also like to add that if you are one senior thinking of traveling, it is absolutely vital that you buy travel cover for retirees. When traveling, older persons are at greatest risk of getting a healthcare emergency. Having the right insurance cover package on your age group can protect your health and provide you with peace of mind.

it’s awesome article. I look forward to the continuation.

Thank you for the good writeup. It in fact was a amusement account it. Look advanced to more added agreeable from you! By the way, how can we communicate?

F*ckin? remarkable things here. I?m very glad to see your post. Thanks a lot and i am looking forward to contact you. Will you kindly drop me a mail?

I have observed that costs for on-line degree pros tend to be a fantastic value. Like a full Bachelor’s Degree in Communication with the University of Phoenix Online consists of 60 credits with $515/credit or $30,900. Also American Intercontinental University Online comes with a Bachelors of Business Administration with a entire course requirement of 180 units and a price of $30,560. Online degree learning has made taking your higher education degree much simpler because you can earn your degree through the comfort of your abode and when you finish working. Thanks for all your other tips I have really learned through the web site.

I just wanted to construct a small message so as to thank you for the amazing tactics you are giving out on this website. My time intensive internet look up has finally been honored with beneficial know-how to exchange with my contacts. I ‘d state that that we readers are rather lucky to exist in a magnificent website with so many brilliant individuals with useful guidelines. I feel quite happy to have used the web page and look forward to plenty of more entertaining minutes reading here. Thanks again for everything.

Try to slowly read the articles on this website, don’t just comment, I think the posts on this page are very helpful, because I understand the intent of the author of this article.

I?ve been exploring for a little for any high quality articles or blog posts on this sort of area . Exploring in Yahoo I at last stumbled upon this website. Reading this info So i am happy to convey that I’ve a very good uncanny feeling I discovered exactly what I needed. I most certainly will make sure to do not forget this website and give it a look on a constant basis.

After research just a few of the weblog posts in your web site now, and I really like your way of blogging. I bookmarked it to my bookmark website record and will be checking again soon. Pls try my web site as effectively and let me know what you think.

Interesting post here. One thing I would like to say is the fact most professional career fields consider the Bachelor’s Degree as the entry level requirement for an online college diploma. Even though Associate Certification are a great way to begin, completing your own Bachelors uncovers many good opportunities to various occupations, there are numerous internet Bachelor Diploma Programs available via institutions like The University of Phoenix, Intercontinental University Online and Kaplan. Another concern is that many brick and mortar institutions present Online variants of their college diplomas but typically for a drastically higher fee than the institutions that specialize in online qualification plans.

Thanks for a marvelous posting! I genuinely enjoyed reading it, you are a great author.I will always bookmark your blog and will often come back down the road. I want to encourage continue your great job, have a nice weekend!

Cool that really helps, thank you.

I have seen that right now, more and more people are now being attracted to surveillance cameras and the field of images. However, as a photographer, you will need to first devote so much of your time deciding the exact model of camera to buy in addition to moving via store to store just so you might buy the most affordable camera of the trademark you have decided to decide on. But it isn’t going to end generally there. You also have to take into consideration whether you can purchase a digital digital camera extended warranty. Thanks a bunch for the good suggestions I accumulated from your weblog.

it’s awesome article. I look forward to the continuation.

I just like the helpful information you provide in your articles

Nice blog right here! Additionally your website so much up fast! What web host are you the use of? Can I get your affiliate link in your host? I want my website loaded up as fast as yours lol

I can’t express how much I appreciate the effort the author has put into producing this outstanding piece of content. The clarity of the writing, the depth of analysis, and the abundance of information presented are simply impressive. Her enthusiasm for the subject is obvious, and it has certainly made an impact with me. Thank you, author, for sharing your wisdom and enriching our lives with this exceptional article!

Hey there are using WordPress for your blog platform? I’m new to the blog world but I’m trying to get started and create my own. Do you need any html coding expertise to make your own blog? Any help would be really appreciated!

Just desire to say your article is as amazing. The clarity to your publish is just spectacular and i can think you’re a professional on this subject. Fine along with your permission let me to grasp your RSS feed to stay up to date with imminent post. Thank you 1,000,000 and please continue the enjoyable work.

plate suculente

I gotta favorite this web site it seems very helpful invaluable

A powerful share, I simply given this onto a colleague who was doing somewhat evaluation on this. And he in reality bought me breakfast because I discovered it for him.. smile. So let me reword that: Thnx for the deal with! But yeah Thnkx for spending the time to discuss this, I feel strongly about it and love reading extra on this topic. If possible, as you develop into experience, would you thoughts updating your weblog with extra details? It is highly useful for me. Big thumb up for this weblog post!

Generally I do not learn article on blogs, but I wish to say that this write-up very compelled me to take a look at and do it! Your writing taste has been surprised me. Thanks, quite great post.

I’m often to blogging and i really appreciate your content. The article has actually peaks my interest. I’m going to bookmark your web site and maintain checking for brand spanking new information.

This is really interesting, You’re a very skilled blogger. I’ve joined your feed and look forward to seeking more of your magnificent post. Also, I’ve shared your site in my social networks!

I like what you guys tend to be up too. This kind of clever work and reporting! Keep up the fantastic works guys I’ve you guys to our blogroll.

whoah this blog is excellent i love reading your posts. Keep up the good work! You know, lots of people are hunting around for this info, you could help them greatly.

Its like you learn my mind! You appear to understand a lot approximately this, such as you wrote the book in it or something. I feel that you simply can do with a few p.c. to pressure the message home a bit, however instead of that, this is magnificent blog. An excellent read. I will definitely be back.

I am very happy to read this. This is the kind of manual that needs to be given and not the random misinformation that is at the other blogs. Appreciate your sharing this best doc.

As I website owner I believe the content material here is really good appreciate it for your efforts.

actually awesome in support of me.

бриллкс

Brillx

Но если вы готовы испытать настоящий азарт и почувствовать вкус победы, то регистрация на Brillx Казино откроет вам доступ к захватывающему миру игр на деньги. Сделайте свои ставки, и каждый спин превратится в захватывающее приключение, где удача и мастерство сплетаются в уникальную симфонию успеха!Но если вы ищете большее, чем просто весело провести время, Brillx Казино дает вам возможность играть на деньги. Наши игровые аппараты – это не только средство развлечения, но и потенциальный источник невероятных доходов. Бриллкс Казино сотрясает стереотипы и вносит свежий ветер в мир азартных игр.

Good post! We will be linking to this particularly great post on our site. Keep up the great writing

There is definately a lot to find out about this subject. I like all the points you made

Some truly quality articles on this website , saved to favorites.

Mybudgetart.com.au is Australia’s Trusted Online Wall Art Canvas Prints Store. We are selling art online since 2008. We offer 1000+ artwork designs, up-to 50 OFF store-wide, FREE Delivery Australia & New Zealand, and World-wide shipping.

Hi there to all, for the reason that I am genuinely keen of reading this website’s post to be updated on a regular basis. It carries pleasant stuff.

Hi! I’m at work surfing around your blog from my new iphone 4! Just wanted to say I love reading your blog and look forward to all your posts! Keep up the fantastic work!

Dead indited articles, Really enjoyed looking through.

Simply wish to say your article is as surprising. The clarity on your put up is just spectacular and i could suppose you’re a professional in this subject. Fine along with your permission allow me to clutch your feed to keep updated with impending post. Thanks one million and please carry on the rewarding work.

Great information shared.. really enjoyed reading this post thank you author for sharing this post .. appreciated

You actually make it seem so easy with your presentation but I find this matter to be actually something which I think I would never understand. It seems too complex and very broad for me. I’m looking forward for your next post, I?ll try to get the hang of it!

I¦ve read some just right stuff here. Definitely worth bookmarking for revisiting. I surprise how a lot attempt you put to create such a fantastic informative site.

Hey! Do you know if they make any plugins to protect against hackers? I’m kinda paranoid about losing everything I’ve worked hard on. Any suggestions?

Thanks a lot for the helpful article. It is also my opinion that mesothelioma has an incredibly long latency period of time, which means that warning signs of the disease may not emerge right until 30 to 50 years after the initial exposure to asbestos fiber. Pleural mesothelioma, which can be the most common sort and influences the area throughout the lungs, might cause shortness of breath, upper body pains, and a persistent cough, which may lead to coughing up our blood.

Keep working ,terrific job!

Thanks for the sensible critique. Me and my neighbor were just preparing to do some research on this. We got a grab a book from our local library but I think I learned more clear from this post. I’m very glad to see such great information being shared freely out there.

You are my inspiration , I possess few web logs and rarely run out from to post : (.

Good post! We will be linking to this particularly great post on our site. Keep up the great writing

Wow, marvelous blog structure! How lengthy have you been running a blog for? you make running a blog look easy. The overall glance of your website is great, let alone the content!

I think this is among the most vital information for me. And i’m glad reading your article. But want to remark on few general things, The web site style is perfect, the articles is really nice : D. Good job, cheers

Howdy! This post could not be written any better! Reading this post reminds me of my old room mate! He always kept chatting about this. I will forward this post to him. Fairly certain he will have a good read. Many thanks for sharing!

I got what you mean , thankyou for posting.Woh I am lucky to find this website through google.

You are my intake, I own few blogs and occasionally run out from post :). “‘Tis the most tender part of love, each other to forgive.” by John Sheffield.

Hey, you used to write magnificent, but the last several posts have been kinda boringK I miss your super writings. Past several posts are just a little bit out of track! come on!

There may be noticeably a bundle to know about this. I assume you made certain good points in options also.

I loved as much as you’ll receive carried out right here. The sketch is attractive, your authored material stylish. nonetheless, you command get bought an edginess over that you wish be delivering the following. unwell unquestionably come more formerly again as exactly the same nearly a lot often inside case you shield this hike.

Some really nice stuff on this site, I like it.

Once I initially commented I clicked the -Notify me when new comments are added- checkbox and now each time a remark is added I get four emails with the identical comment. Is there any manner you possibly can remove me from that service? Thanks!

Thanks for the ideas you have shared here. One more thing I would like to express is that laptop or computer memory demands generally go up along with other innovations in the know-how. For instance, if new generations of processor chips are introduced to the market, there is usually a related increase in the size and style calls for of both pc memory plus hard drive room. This is because the software program operated by means of these cpus will inevitably boost in power to leverage the new engineering.

Hey there! I’ve been reading your weblog for a while now and finally got the courage to go ahead and give you a shout out from Atascocita Texas! Just wanted to say keep up the great work!

It?s really a nice and useful piece of info. I am glad that you shared this helpful information with us. Please keep us up to date like this. Thanks for sharing.

https://hotclubdepiracicaba.com.br/post8/

I appreciate, cause I found just what I was looking for. You have ended my four day long hunt! God Bless you man. Have a nice day. Bye

Good day! I simply would like to give an enormous thumbs up for the great information you’ve here on this post. I will be coming again to your weblog for extra soon.

very informative articles or reviews at this time.

I’ve been surfing online more than three hours nowadays, yet I by no means found any fascinating article like yours. It is lovely price enough for me. Personally, if all site owners and bloggers made excellent content as you probably did, the web will likely be a lot more useful than ever before.

Hello my loved one! I want to say that this post is awesome, nice written and come with almost all vital infos. I?¦d like to look more posts like this .

I’ve been exploring for a bit for any high-quality articles or blog posts on this kind of area . Exploring in Yahoo I at last stumbled upon this website. Reading this information So i am happy to convey that I’ve an incredibly good uncanny feeling I discovered exactly what I needed. I most certainly will make sure to do not forget this web site and give it a glance regularly.

Thanks for sharing excellent informations. Your web site is so cool. I’m impressed by the details that you have on this website. It reveals how nicely you perceive this subject. Bookmarked this web page, will come back for more articles. You, my pal, ROCK! I found simply the info I already searched all over the place and simply could not come across. What a perfect web-site.

I just couldn’t depart your website before suggesting that I really enjoyed the standard info a person provide for your visitors? Is going to be back often in order to check up on new posts

Hi! I’m at work surfing around your blog from my new iphone 4! Just wanted to say I love reading your blog and look forward to all your posts! Carry on the outstanding work!

An attention-grabbing dialogue is price comment. I think that it’s best to write extra on this topic, it won’t be a taboo subject however generally persons are not sufficient to talk on such topics. To the next. Cheers

Keep up the excellent work, I read few content on this web site and I think that your website is rattling interesting and has got circles of superb information.

you’re truly a excellent webmaster. The website loading velocity is amazing. It seems that you are doing any unique trick. Moreover, The contents are masterpiece. you’ve performed a great job in this matter!

After examine just a few of the weblog posts on your web site now, and I truly like your method of blogging. I bookmarked it to my bookmark web site checklist and can be checking again soon. Pls check out my website as well and let me know what you think.

Very fantastic visual appeal on this website , I’d rate it 10 10.

to say concerning this paragraph, in my view its

You really make it appear so easy with your presentation but I find this matter to be actually one thing that I believe I’d never understand. It seems too complicated and very wide for me. I’m taking a look ahead on your next publish, I will try to get the cling of it!

Hi, i think that i saw you visited my blog thus i came to ?return the favor?.I’m trying to find things to enhance my site!I suppose its ok to use some of your ideas!!

Outstanding post however I was wanting to know if you could write a litte more on this subject? I’d be very thankful if you could elaborate a little bit more. Cheers!

Wow, awesome blog layout! How lengthy have you been running a blog for? you make running a blog look easy. The overall look of your site is magnificent, let alone the content!

I do not even understand how I ended up here, but I assumed this publish used to be great

Do you mind if I quote a couple of your posts as long as I provide credit and sources back to your site? My blog site is in the very same niche as yours and my users would genuinely benefit from some of the information you present here. Please let me know if this okay with you. Thanks!

Appreciate it for this post, I am a big big fan of this site would like to proceed updated.

You made some clear points there. I looked on the internet for the topic and found most individuals will go along with with your website.

Hello. splendid job. I did not imagine this. This is a fantastic story. Thanks!

I have been browsing on-line greater than 3 hours lately, yet I never found any fascinating article like yours. It is lovely price enough for me. In my opinion, if all website owners and bloggers made excellent content as you probably did, the internet might be a lot more helpful than ever before.

Sweet blog! I found it while browsing on Yahoo News. Do you have any tips on how to get listed in Yahoo News? I’ve been trying for a while but I never seem to get there! Thanks

Use the 1xBet promo code for registration : VIP888, and you will receive a €/$130 exclusive bonus on your first deposit. Before registering, you should familiarize yourself with the rules of the promotion.

Hi there! Someone in my Facebook group shared this website with us so I came to give it a look. I’m definitely loving the information. I’m bookmarking and will be tweeting this to my followers! Superb blog and brilliant style and design.

You should take part in a contest for one of the best blogs on the web. I will recommend this site!

You have brought up a very fantastic details , regards for the post.

Whats Going down i’m new to this, I stumbled upon this I have found It positively useful and it has aided me out loads. I am hoping to contribute & aid other users like its aided me. Good job.

This is really interesting, You are a very skilled blogger. I’ve joined your rss feed and look forward to seeking more of your fantastic post. Also, I’ve shared your web site in my social networks!

You are a very smart person!

whoah this blog is great i love studying your posts. Keep up the great paintings! You know, a lot of persons are searching round for this info, you could aid them greatly.

Hiya, I am really glad I’ve found this information. Nowadays bloggers publish just about gossips and web and this is actually irritating. A good site with interesting content, that’s what I need. Thank you for keeping this web site, I’ll be visiting it. Do you do newsletters? Can not find it.

You can certainly see your enthusiasm within the work you write. The sector hopes for even more passionate writers like you who aren’t afraid to say how they believe. At all times follow your heart. “The point of quotations is that one can use another’s words to be insulting.” by Amanda Cross.

http://www.thebudgetart.com is trusted worldwide canvas wall art prints & handmade canvas paintings online store. Thebudgetart.com offers budget price & high quality artwork, up-to 50 OFF, FREE Shipping USA, AUS, NZ & Worldwide Delivery.

There is definately a lot to find out about this subject. I like all the points you made

Can I just say what a aid to search out somebody who actually is aware of what theyre speaking about on the internet. You definitely know the best way to bring a problem to gentle and make it important. More people have to read this and perceive this aspect of the story. I cant consider youre no more popular because you positively have the gift.

This is really interesting, You’re a very skilled blogger. I’ve joined your feed and look forward to seeking more of your magnificent post. Also, I’ve shared your site in my social networks!

I’m now not certain the place you’re getting your information, however great topic. I must spend some time studying more or figuring out more. Thank you for great information I was searching for this info for my mission.

Hello! I could have sworn I’ve been to this blog before but after browsing through some of the post I realized it’s new to me. Anyways, I’m definitely happy I found it and I’ll be book-marking and checking back frequently!

Keep working ,terrific job!

Hello! I just would like to give a huge thumbs up for the great info you have here on this post. I will be coming back to your blog for more soon.

Great site. Lots of useful information here. I am sending it to some buddies ans also sharing in delicious. And of course, thanks for your effort!

Awesome! Its genuinely remarkable post, I have got much clear idea regarding from this post

Nice post. I learn something more challenging on different blogs everyday. It will always be stimulating to read content from other writers and practice a little something from their store. I’d prefer to use some with the content on my blog whether you don’t mind. Natually I’ll give you a link on your web blog. Thanks for sharing.

Thanks for the write-up. My spouse and i have continually noticed that almost all people are eager to lose weight when they wish to look slim as well as attractive. However, they do not constantly realize that there are other benefits for losing weight in addition. Doctors assert that overweight people have problems with a variety of ailments that can be perfectely attributed to their own excess weight. The good thing is that people who are overweight along with suffering from different diseases are able to reduce the severity of their illnesses by means of losing weight. It is possible to see a continuous but notable improvement in health while even a negligible amount of weight-loss is realized.

You completed certain fine points there. I did a search on the subject matter and found mainly persons will agree with your blog.

You have noted very interesting points! ps decent web site. “Formal education will make you a living self-education will make you a fortune.” by Jim Rohn.

I gotta favorite this web site it seems very helpful invaluable

he blog was how do i say it… relevant, finally something that helped me. Thanks

Hi there, I found your blog via Google while looking for a related topic, your web site came up, it looks good. I’ve bookmarked it in my google bookmarks.

Hello! I’ve been following your blog for some time now and finally got the courage to go ahead and give you a shout out from Houston Texas! Just wanted to mention keep up the great job!

Of course, what a magnificent blog and enlightening posts, I definitely will bookmark your site.All the Best!

What i don’t understood is in reality how you are no longer actually much more neatly-preferred than you might be right now. You’re so intelligent. You already know thus considerably in terms of this topic, made me in my opinion consider it from numerous various angles. Its like women and men don’t seem to be interested except it?s one thing to do with Lady gaga! Your own stuffs nice. At all times care for it up!

This design is incredible! You certainly know how to keep a reader amused. Between your wit and your videos, I was almost moved to start my own blog (well, almost…HaHa!) Fantastic job. I really enjoyed what you had to say, and more than that, how you presented it. Too cool!

I gotta bookmark this web site it seems invaluable handy

Some truly superb information, Gladiolus I discovered this.

I like the helpful info you provide to your articles. I will bookmark your weblog and test once more right here frequently. I am fairly certain I?ll be informed plenty of new stuff proper right here! Good luck for the next!

This was beautiful Admin. Thank you for your reflections.

Thanks for the auspicious writeup. It in reality was once a leisure account it. Glance complex to far brought agreeable from you! However, how could we keep in touch?

Hey! Do you use Twitter? I’d like to follow you if that would be ok. I’m undoubtedly enjoying your blog and look forward to new posts.

Magnificent site. Plenty of useful information here. I am sending it to a few friends ans also sharing in delicious. And obviously, thanks for your effort!

Great blog! Do you have any tips and hints for aspiring writers? I’m planning to start my own site soon but I’m a little lost on everything. Would you suggest starting with a free platform like WordPress or go for a paid option? There are so many options out there that I’m totally overwhelmed .. Any suggestions? Appreciate it!

you’re really a excellent webmaster. The website loading velocity is incredible. It seems that you are doing any distinctive trick. In addition, The contents are masterwork. you have done a fantastic task in this topic!

Absolutely indited articles, Really enjoyed reading through.