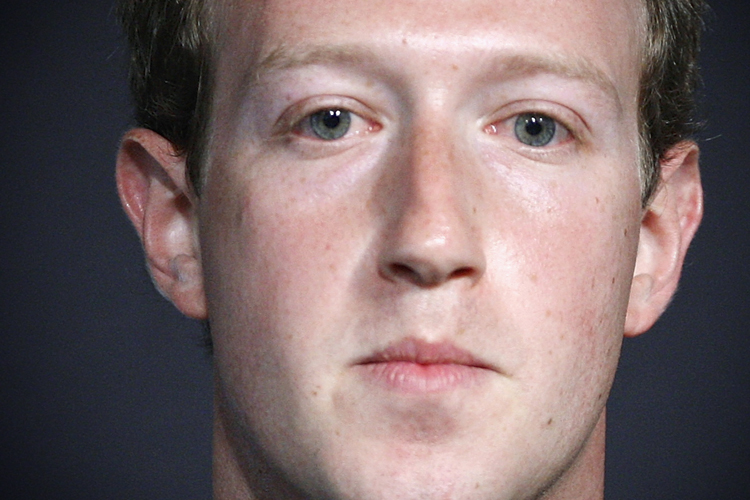

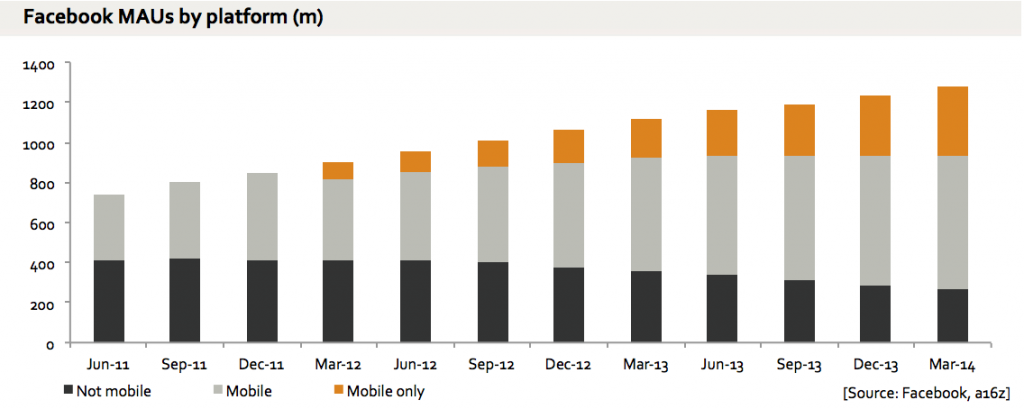

Last week, during Mark Zuckerberg’s congressional hearing, we heard Artificial Intelligence (AI) mentioned time and time again as the one-size-fits-all solution to Facebook’s problems of hate speech, harassment, fake news… Sadly, though, many agree with me that we are a long way from AI being able to eradicate all that is bad on the internet.

Abusive language and behavior are very hard to detect, monitor, and predict. As Zuckerberg himself pointed out, many different factors make this particular job hard: language, culture, and context all play a role in helping us determine whether what we hear, read, or see should be deemed offensive.

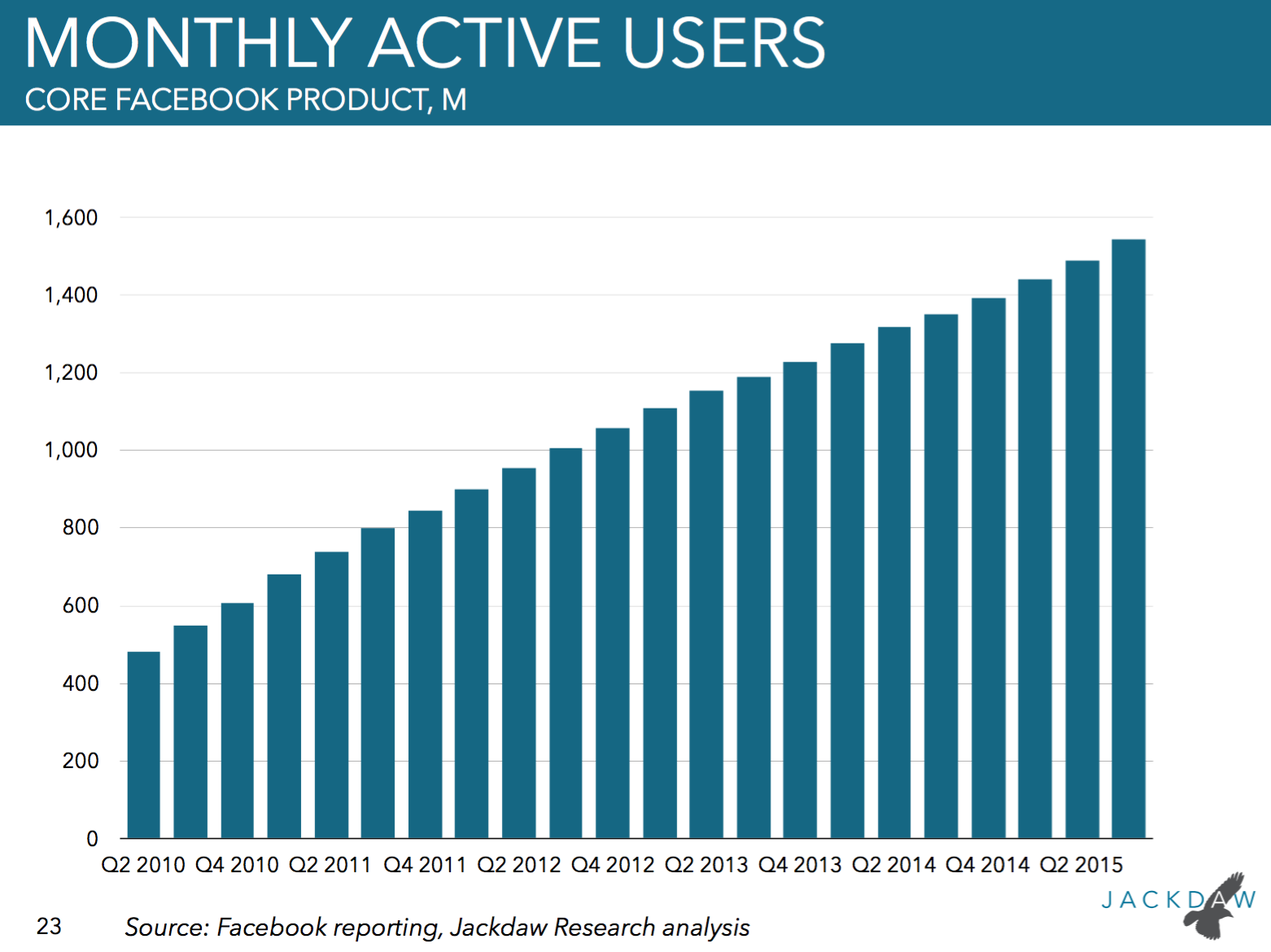

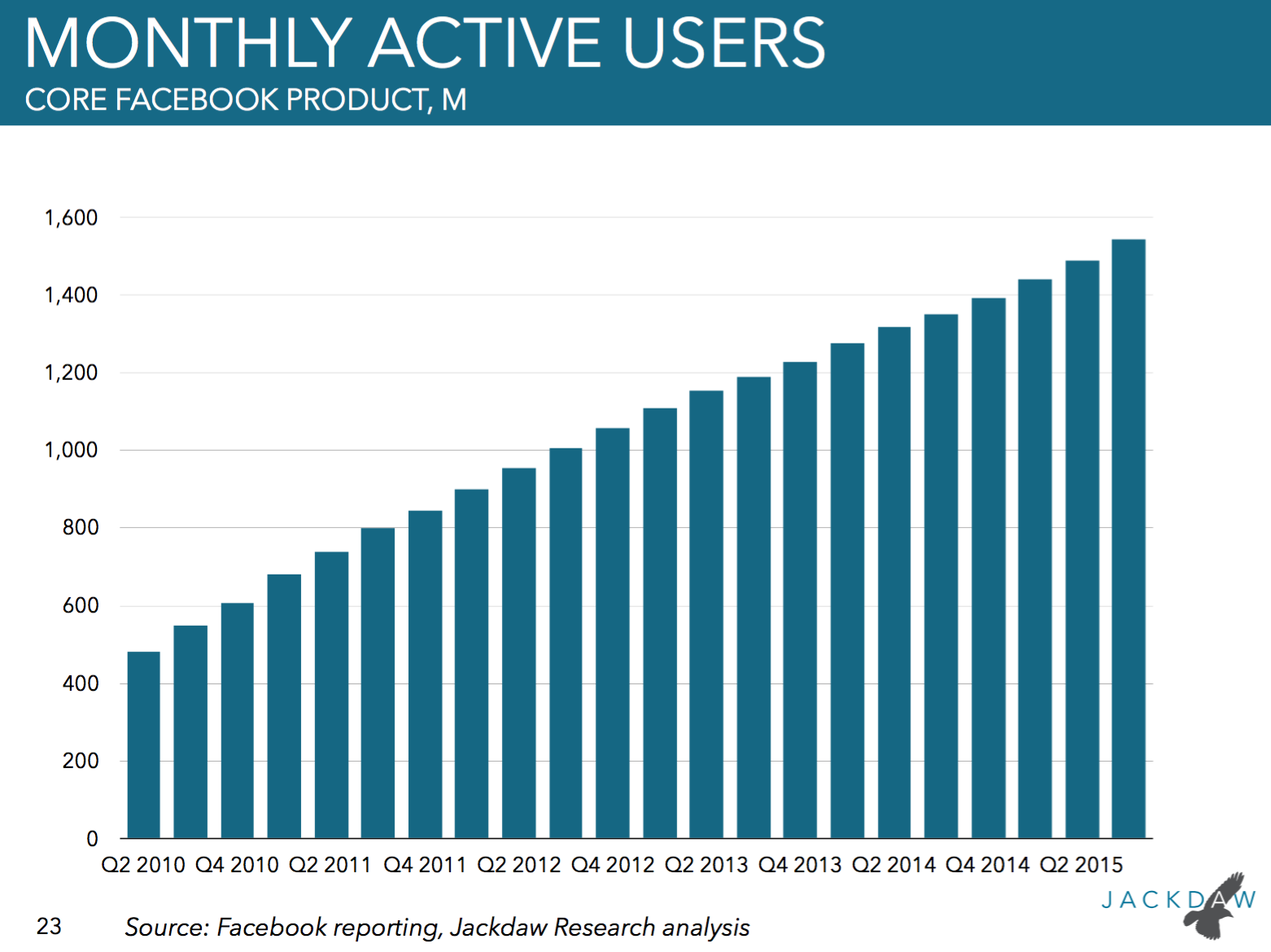

The problem we have today with most platforms, not just Facebook, is that humans are determining what is offensive. They might be using a set of parameters to do so, but ultimately they rely on their own judgment, so consistency is an issue. Relying on humans also makes moderation much harder to scale. Zuckerberg’s figure of 20,000 people is certainly impressive, but when you think about the content that 2 billion active users can post in an hour, you can see how futile even that effort seems.

I don’t want to get into a discussion of how Zuckerberg might have used the promise of AI as a red herring to take some pressure off his back. But I do want to look at why, while AI can solve scalability, its consistency and accuracy in detecting hate speech in the first place are highly questionable today.

The “Feed It Enough Data” Argument

Before we can talk about AI and its potential benefits, we need to talk about Machine Learning (ML). For machines to be able to reason like a human, or hopefully better, they need to be able to learn. We teach machines with algorithms that discover patterns and generate insights from the massive amounts of data they are exposed to, so that they can make decisions on their own in the future. If we input enough pictures and descriptions of dogs, and hand-label what looks like a dog or is described as a dog, the machine will eventually be able to recognize the next engineered “doodle” as a dog.
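To make the idea of learning from labeled examples concrete, here is a minimal sketch in Python, assuming short text descriptions stand in for dog pictures. It uses multinomial naive Bayes, one of the simplest classic text classifiers, chosen purely for illustration; the class name `NaiveBayesText` and the tiny training set are hypothetical, and real systems train far larger models on far more data.

```python
import math
from collections import Counter


class NaiveBayesText:
    """Minimal multinomial naive Bayes text classifier with add-one smoothing."""

    def fit(self, texts, labels):
        self.classes = sorted(set(labels))
        self.doc_counts = Counter(labels)  # how many examples carry each label
        self.word_counts = {c: Counter() for c in self.classes}
        for text, label in zip(texts, labels):
            self.word_counts[label].update(text.lower().split())
        self.vocab = set()
        for counts in self.word_counts.values():
            self.vocab.update(counts)
        return self

    def predict(self, text):
        total_docs = sum(self.doc_counts.values())
        best_label, best_score = None, -math.inf
        for c in self.classes:
            # Start from the log prior: how common this label was in training.
            score = math.log(self.doc_counts[c] / total_docs)
            denom = sum(self.word_counts[c].values()) + len(self.vocab)
            for word in text.lower().split():
                # Add-one smoothing keeps unseen words from zeroing the score.
                score += math.log((self.word_counts[c][word] + 1) / denom)
            if score > best_score:
                best_label, best_score = c, score
        return best_label


# Hypothetical toy training set: descriptions labeled by a human.
texts = [
    "a small dog with curly fur and floppy ears",
    "a dog wagging its tail in the park",
    "a curly haired doodle puppy fetching a ball",
    "a cat sleeping on the sofa",
    "a red car parked on the street",
    "a bowl of fruit on the table",
]
labels = ["dog", "dog", "dog", "other", "other", "other"]

model = NaiveBayesText().fit(texts, labels)
print(model.predict("a fluffy puppy with curly ears"))  # dog
print(model.predict("a cat on the sofa"))  # other
```

The model has never seen the exact phrase "a fluffy puppy with curly ears"; it labels it `dog` only because words like "puppy" and "curly" appeared in the examples a human labeled as dogs. That is the sense in which the machine "learns", and also why it can only be as good as its labeled data.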

So one would think that if you feed a machine enough swear words and racial, religious, or sexual slurs, it would be able not only to detect but also to predict toxic content going forward. The problem is that there is a lot of hate speech out there that uses very polite words, just as there is harmless content that is loaded with swear words. Innocuous words such as “animals” or “parasites” can be charged with hate when directed at a specific group of people. Users engaging in hate speech might also misspell words or use symbols instead of letters, all to keep keyword-based filters from catching them.
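The weakness of keyword lists is easy to demonstrate. The Python below is a hypothetical word-list filter, sketched for illustration only: the blocklist uses mild placeholder insults instead of real slurs, and the character-substitution table is an assumption, not any platform's actual rules.

```python
import re

# Hypothetical blocklist: mild placeholder insults stand in for real slurs.
BLOCKLIST = {"idiot", "moron"}

# Illustrative character substitutions used to dodge filters (not exhaustive).
SUBSTITUTIONS = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a", "@": "a", "$": "s"})


def tokens(text):
    """Lowercase the text and keep only runs of letters."""
    return re.findall(r"[a-z]+", text.lower())


def naive_filter(text):
    """Flag text if any token appears on the blocklist."""
    return any(tok in BLOCKLIST for tok in tokens(text))


def normalized_filter(text):
    """Undo simple symbol-for-letter swaps before matching."""
    return naive_filter(text.lower().translate(SUBSTITUTIONS))


print(naive_filter("You idiot"))  # True
print(naive_filter("You 1d10t"))  # False: obfuscation slips past
print(normalized_filter("You 1d10t"))  # True
print(normalized_filter("Those people are parasites"))  # False: polite hate speech
print(naive_filter("I feel like such an idiot for missing the bus"))  # True: false positive
```

Normalizing symbols catches the obfuscated insult, but no amount of string cleanup flags the politely worded "parasites" sentence, and the filter still flags a harmless, self-deprecating remark. Keywords alone cannot tell these cases apart.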

Furthermore, training the machine is still a process that involves humans, and consistency about what is offensive is hard to achieve. According to a study published by Kwok and Wang in 2013, there was a mere 33% agreement between coders from different races when they were tasked with identifying racist tweets.
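Agreement between coders can be measured in more than one way. As a minimal sketch, assuming two coders label the same items, the Python below computes simple percentage agreement and Cohen's kappa, a standard statistic that corrects for the agreement expected by chance. The labels are invented for illustration; they are not data from Kwok and Wang's study.

```python
from collections import Counter


def percent_agreement(a, b):
    """Share of items on which two coders chose the same label."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Agreement corrected for what the coders' label frequencies predict by chance."""
    n = len(a)
    p_observed = percent_agreement(a, b)
    counts_a, counts_b = Counter(a), Counter(b)
    p_chance = sum(counts_a[k] * counts_b[k] for k in set(a) | set(b)) / (n * n)
    if p_chance == 1:
        return 1.0  # both coders used the same single label throughout
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical labels from two coders on the same six tweets.
coder_a = ["racist", "not", "racist", "not", "racist", "not"]
coder_b = ["racist", "racist", "not", "not", "not", "not"]

print(percent_agreement(coder_a, coder_b))  # 0.5
print(cohens_kappa(coder_a, coder_b))  # 0.0
```

Here the two coders agree half the time, yet kappa is 0: they agree no more often than two people guessing with those same label frequencies would. Training data built on labels like these inherits that inconsistency.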

In 2017, Jigsaw, a company operated by Alphabet, released Perspective, an API available to developers that uses machine learning to spot abuse and harassment online. Perspective assigned comments a “toxicity score” based on keywords and phrases, and then used that score to predict whether new content was toxic. The results were not very encouraging. According to New Scientist:

“you’re pretty smart for a girl” was deemed 18% similar to comments people had deemed toxic, whereas “I love Fuhrer” was 2% similar.
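For developers, using Perspective comes down to sending a comment and reading back a score. The Python sketch below assumes the `comments:analyze` endpoint and the JSON field names from Perspective's public documentation; the service and its models have changed since 2017, so check the current docs before relying on them. The `is_toxic` helper and its 0.7 threshold are hypothetical: each platform chooses its own cutoff.

```python
import json
import urllib.request

# Endpoint as described in Perspective's public docs; verify before use.
ENDPOINT = "https://commentanalyzer.googleapis.com/v1alpha1/comments:analyze"


def build_request(text, lang="en"):
    """Request body asking Perspective for a TOXICITY score."""
    return {
        "comment": {"text": text},
        "languages": [lang],
        "requestedAttributes": {"TOXICITY": {}},
    }


def parse_toxicity(response):
    """Pull the summary TOXICITY score (0.0 to 1.0) out of a response dict."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


def score_comment(text, api_key):
    """Send one comment to Perspective and return its score (needs network and a key)."""
    data = json.dumps(build_request(text)).encode("utf-8")
    req = urllib.request.Request(
        f"{ENDPOINT}?key={api_key}",
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return parse_toxicity(json.load(resp))


def is_toxic(score, threshold=0.7):
    """Hypothetical moderation rule: flag anything at or above the threshold."""
    return score >= threshold


print(is_toxic(0.18), is_toxic(0.02))  # False False
```

If the New Scientist percentages are read as scores of 0.18 and 0.02, both comments fall well below such a threshold, so neither would be flagged, and the "I love Fuhrer" comment would look even safer than the sexist one.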

The “Feed It the Right Data” Argument

So it seems that what matters is not the amount of data but the right kind of data. But how do we get to it? Haji Mohammad Saleem and his team at McGill University, in Montreal, tried a different approach.

They focused on content on Reddit, which they described as “a major online home for both hateful speech communities and supporters for their target groups.” Access to a large amount of data from groups that are now banned on Reddit allowed the McGill team to analyze the linguistic practices that hate groups share. This let them avoid compiling word lists while still providing a large amount of data to train and test their classifiers. Their method resulted in fewer false positives, but it is still not perfect.
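The key idea is that labels come from where a post was made rather than from a word list. The Python below is a minimal sketch of that idea, not the McGill team's actual pipeline: it ranks words by a smoothed log-ratio of their frequency in one community versus another, a common simple way to surface vocabulary one group favors. The two communities and their posts are invented placeholders.

```python
import math
from collections import Counter


def distinctive_words(posts_a, posts_b, top_n=3, alpha=1.0):
    """Rank words by the smoothed log-ratio of their frequency in community A vs. B."""
    counts_a = Counter(w for p in posts_a for w in p.lower().split())
    counts_b = Counter(w for p in posts_b for w in p.lower().split())
    vocab = set(counts_a) | set(counts_b)
    total_a = sum(counts_a.values()) + alpha * len(vocab)
    total_b = sum(counts_b.values()) + alpha * len(vocab)
    scores = {}
    for w in vocab:
        # Additive smoothing so words absent from one community don't divide by zero.
        p_a = (counts_a[w] + alpha) / total_a
        p_b = (counts_b[w] + alpha) / total_b
        scores[w] = math.log(p_a / p_b)
    # Highest score first; ties broken alphabetically for a stable result.
    return sorted(scores, key=lambda w: (-scores[w], w))[:top_n]


# Hypothetical posts; the label is simply the community each post came from.
hateful_posts = ["they are parasites", "send the parasites back", "those animals ruin everything"]
support_posts = ["welcome to our community", "they deserve respect", "support for everyone here"]

print(distinctive_words(hateful_posts, support_posts, top_n=2))  # ['parasites', 'animals']
```

No list of banned words was supplied, yet "parasites" and "animals" surface as the first community's most distinctive terms. A classifier trained on community-labeled posts can pick up coded vocabulary in a similar way, which is why this approach sidesteps hand-built word lists.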

Some researchers believe that AI will never be fully effective at catching toxic language, because what counts as toxic is subjective and requires human judgment.

Minimizing Human Bias

Whether humans stay involved in coding the machines or remain mostly responsible for policing hate speech, what really concerns me is human bias. This is different from the question of a consistent approach that accounts for cultural, linguistic, and contextual nuances. This is about humans’ personal beliefs creeping into their decisions when they are coding the machines or monitoring content. Try searching for “bad hair” and see how many images of beautifully crafted hair designs for Black women show up in your results. That, right there, is human bias creeping into an algorithm.

This is precisely why I have been very vocal about the importance of representation across tech overall, and in particular when it comes to AI. If the people we trust to teach the machines, machines we will entrust with many different kinds of tasks, fairly represent different genders, races, religious and political beliefs, and sexual orientations, we will have a better chance of minimizing bias.

Even when we eliminate bias to the best of our ability, we would be deluding ourselves to believe Zuckerberg’s rosy picture of the future. Hate speech, fake news, and toxic behavior change all the time, making the job of training machines a never-ending one. Ultimately, accountability rests with platform owners and with us as users. Humanity needs to save itself, not wait for AI.